In 2012 a dozen Australian universities will trial a system of assessing the impact of their research on the world outside academia as a possible means of directing funding. The pilot scheme is being led by the Australian Technology Network of Universities (ATN).

The ATN ran a similar trial in 2005, which informed the Howard government as it developed the Research Quality Framework (RQF), a proposed funding structure that aimed to reward research with broad social benefits. The RQF did not enjoy the support of the Group of Eight, an alliance of institutions that - in a field of about 40 - account for more than two thirds of the sector's research activity, research output and research training. The Rudd government scrapped the RQF after winning office in 2007, yet the RQF's approach inspired England's new impact-measuring system. Under then-research minister Kim Carr, the ALP established Excellence in Research for Australia (ERA), a heavily metrics-based system supported by the Group of Eight.

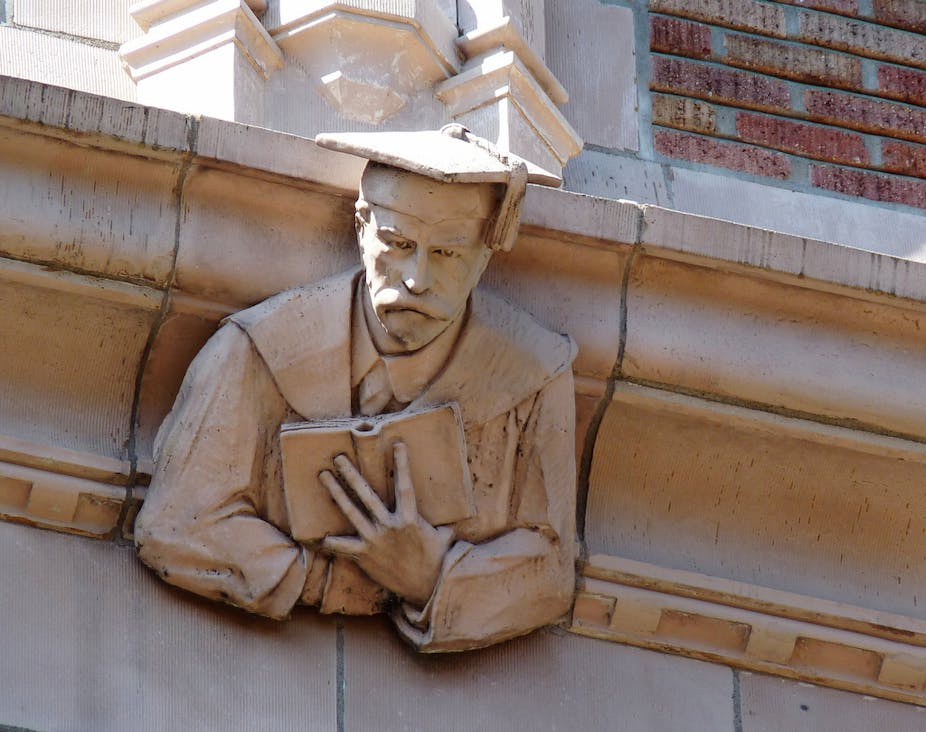

However, the Group of Eight is involved in the ATN's new trial, and the alliance's Director of Research, Dr Ian McMahon (who holds a doctorate in chemistry), speaks here:

Dr Ian McMahon, Director of Research, Group of Eight

Why is the Group of Eight involved, and what’s happening?

With ERA they had a close look at "research excellence", but clearly there's [now] general acceptance that we'd also like to know a lot more about the broader social, economic, and other benefits of research. We're approaching the trial as an experiment in trying to answer the question, "Well, what measures are there of the benefits of research?", whether you call it "research impact" or use another name. It's not just the commercialisation benefits [we're looking at] but the social benefits, and benefits to welfare generally. We'll look at possible measures and trial what's feasible, including a case-study approach similar to the one being used by the Higher Education Funding Council for England.

We had a symposium, and a lot of the case studies that were presented as part of the workshop discussion didn't really contain enough information about the benefits.

The trial will have an advisory board that will help frame the exercise and develop some of the indicators, as well as a steering group made up of the Deputy Vice-Chancellors (Research) from the participating institutions. Four Group of Eight institutions will participate in the trial: Western Australia, Melbourne, New South Wales, and Queensland. And then there will be an implementation group responsible for making sure it happens on the ground at each institution.

We’re very keen that it be seen as a separate exercise, a complementary exercise, to ERA. ERA is about excellence, which we don’t want to disturb, but clearly there is a need for some additional information.

Does less metrics-based assessment of research suit some disciplines more than others? Are some disciplines happier than others with the current system?

In terms of measures of excellence, it's true that different measures suit different disciplines, and the ARC has recognised that. So for your traditional science disciplines, citations are generally accepted as a reasonable measure of excellence. But because of the nature of publishing in the disciplines where there's a preference for books - the humanities - that's not the case, and therefore citations are not used by the ARC [the Australian Research Council, which administers ERA and allocates funding] there. I think the humanities are broadly happy with the measures the ARC uses for them. They're different to those used in the sciences, and different to what might be used in IT, where conference papers become a more important output. So the ARC has recognised discipline differences and is choosing the appropriate mix. You could always argue that there are better indicators, and fine, but they are [currently] the best the ARC has been able to come up with. So in that sense there's broad acceptance. But if you then translate this to measuring impact and research benefits, you've got to remember that the differences between disciplines will apply here as well. What's right for engineering is often your commercialisation indicators: measures of patents and licensing fees and all those sorts of things. They may well be a good measure in engineering but are meaningless in the humanities.

In developing the indicators you might use to measure the impact or benefits of research - such as in this trial - you’ve got to take discipline differences into consideration. Part of the trial will be about recommending what might be appropriate for engineering, the sciences, the social sciences and the humanities.

In the Group of Eight, we're certainly very keen that the trial looks at a broad range of research disciplines. So it's not just a focus on things that are easily commercialisable, and therefore you're going to need a broad range of indicators to capture the information. But it is a trial; if this were easy, it would already have been done. You couldn't just use commercialisation indicators such as patents or licensing fees, because they're only appropriate to a small group of disciplines.

What do you think of the UK model?

It’s largely a case-study model, but it’s being done in quite a different context. As I understand it, each department puts in information about, say, their five best papers, and there’ll be other metrics that the department will have, and then there will be the sample case studies of the best impact stories from each of those departments. We’re looking a bit more broadly than that. Another difference is our trial is not part of ERA, so it will be a stand-alone exercise.

The REF [England's new Research Excellence Framework] is quite different to what we have here at the moment. It's organised by department rather than by field of research, so each department submits a portfolio of information by which both its excellence and its research impact can be judged. It's hard to argue [in favour of one over the other] because we have such different systems. What they're doing with impact in the REF is an integral part of what they're doing; you couldn't take that out and just put it into an Australian context, which is part of why we have to design something from scratch for here.

The symposium was about setting the scene and working through the trial timetable. We would aim to conduct the trial after the ERA submissions are in - from about May next year, with the aim of completing it and having the results written up by about November. Obviously, before May there'll be a lot of work by the advisory board and implementation group on developing what the trial would actually ask for, and all the paperwork and documentation associated with that.

Minister Carr put out a press release on the Thursday before the symposium saying that the Department [of Research, now in Chris Evans's portfolio] would undertake a feasibility study of the research impact of publicly funded research. I could see that what we're doing as a trial might help inform what they're doing, but they no doubt would want to explore other things and obtain information from other people about possible measures of research impact. Hopefully, what we and the ATN are doing together as a trial will help inform that process. Whether it's adopted remains to be seen, because it still depends on the results of the trial.

Are patents and licensing fees counted in ERA’s current measures of excellence?

A very small number of commercialisation outputs are included in ERA. In terms of "research excellence", it's probably not appropriate to include a lot more, because ERA is measuring excellence, not impact. A patent per se doesn't actually tell you a lot. I could make a discovery and get a patent for it, but that doesn't tell you much, because you can patent all sorts of things. Whether it ultimately has an impact is another matter. We're not looking at impact as part of ERA, but if you were including commercialisation measures in an impact study, you'd want to see what came out of that patent. Did it actually lead to a commercialisable product? Was it patented worldwide, or not in the US? It costs a lot of money to maintain your patents at an international level and to defend them if necessary, and you ultimately do that based on commercial decisions as to whether the patent's going to lead to a commercialisable product.

Who is driving the current push for impact measures - does it favour certain universities or disciplines?

Every university has a different mix of disciplines and some disciplines have clearer impacts than others. But all universities would like to be able to have strong evidence for the benefits of their research. If you look at CSIRO over the years, it’s become much better at educating the Australian public about the benefits of the research it does and therefore about the investment in research. All universities would like to obtain stronger evidence that way as well, because it would be seen as helping to increase their standing in the community.

The Group of Eight institutions are very broadly based. They have large medical schools, large engineering schools, large professional faculties in veterinary science and agriculture, and so on. So the Group of Eight certainly believes that if, when we sit down, we are able to determine a way of measuring impact, its members will show up very clearly as being very strong in terms of the benefit that comes from their research.

What do you make of the claim by Peter Shergold that to encourage researchers to get involved in public policy, we need a system that recognises and rewards social impact?

First of all, you'd have to find ways of measuring impact that could capture the incentive for direct involvement in policy. We would argue that there is a strong link anyway between research excellence and policy impact. In other words, who is your best economist? Who are your best people engaged in Asia-Pacific policy, who are going to have the strongest impact on policy development in government?

I worked for many years at the Australian National University, and if you look at the economists and the people in the research school of the Asia-Pacific, besides having strong research excellence, they also have very strong policy input to government. That was part of the driving force behind why the ANU was established in the first place, and why, just after the Second World War, the government decided to fund the research school of Asia-Pacific studies and the research school of social sciences at the ANU.

How do you look back on the Howard government’s RQF?

At the time, the Group of Eight had a lot of problems with the RQF, and with ERA many of those concerns were certainly addressed. We're comfortable with ERA and the results that have come from it. A lot of our concerns were around the structure of the RQF and what was being proposed to be measured. The use purely of case studies for impact was a concern. Overall, ERA has turned out to be very much better at handling excellence than the RQF would have been. As part of our trial, the terms of reference do provide for looking at measures other than case studies and other than what was being proposed for the RQF.
