Clinical trials have been the gold standard of scientific testing ever since the Scottish naval surgeon Dr James Lind conducted the first while trying to conquer scurvy in 1747. They attract tens of billions of dollars of annual investment and researchers have published almost a million trials to date according to the most complete register, with 25,000 more each year.

Clinical trials break down into two categories: trials to ensure a treatment is fit for human use and trials to compare different existing treatments to find the most effective. The first category is funded by medical companies and mainly happens in private laboratories.

The second category is at least as important, routinely informing decisions by governments, healthcare providers and patients everywhere. It tends to take place in universities. The outlay is smaller, but hardly pocket change. For example, the National Institute for Health Research, which coordinates and funds NHS research in England, spent £74m on trials in 2014/15 alone.

Yet there is a big problem with these publicly funded trials that few will be aware of: a substantial number, perhaps almost half, produce results that are statistically uncertain. If that sounds shocking, it should do. A large amount of information about the effectiveness of treatments could be incorrect. How can this be right and what are we doing about it?

The participation problem

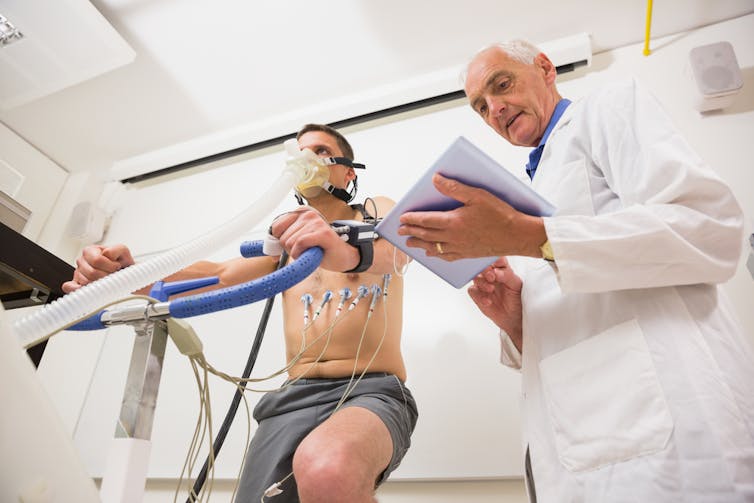

Clinical trials examine the effects of a drug or treatment on a suitable sample of people over an appropriate time. These effects are compared with a second set of people – the “control group” – which thinks it is receiving the same treatment but is usually taking a placebo or alternative treatment. Participants are assigned to groups at random, hence we talk about randomised controlled trials.

If there are too few participants in a trial, researchers may not be able to declare a result with certainty even if a difference is detected. Before a trial begins, it is their job to calculate the appropriate sample size using data on the minimum clinically important difference and the variation in the outcome being measured in the population being studied. They publish this along with the trial results so that statisticians can check their calculations.
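To make the sample size calculation concrete, here is a minimal sketch of one common textbook version: a two-arm trial with equal group sizes comparing the average of a continuous outcome, using a normal approximation. The function name, the 5% significance level and the 90% power default are illustrative assumptions, not figures from any trial mentioned here, and real trials often need more elaborate calculations.

```python
from math import ceil
from statistics import NormalDist


def sample_size_per_group(min_important_diff, outcome_sd, alpha=0.05, power=0.90):
    """Approximate participants needed in EACH arm of a two-arm trial.

    min_important_diff: minimum clinically important difference in the outcome
    outcome_sd: standard deviation of the outcome in the study population
    alpha: two-sided significance level (assumed default, not from the article)
    power: chance of detecting the difference if it is real (assumed default)
    """
    standard_normal = NormalDist()
    z_alpha = standard_normal.inv_cdf(1 - alpha / 2)  # guards against false positives
    z_beta = standard_normal.inv_cdf(power)           # guards against false negatives
    n = 2 * ((z_alpha + z_beta) * outcome_sd / min_important_diff) ** 2
    return ceil(n)  # round up: you cannot recruit a fraction of a person


# Hypothetical example: detect a 5-point difference when the outcome's SD is 10
print(sample_size_per_group(min_important_diff=5, outcome_sd=10))
```

The formula shows why effectiveness trials get so large: halving the difference you want to detect quadruples the number of people you need.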

Early-stage trials have fewer recruitment problems. Very early studies involve animals and later stages pay people well to take part and don’t need large numbers. For trials into the effectiveness of treatments, it’s more difficult both to recruit and retain people. You need many more of them and they usually have to commit to longer periods. It would be a bad use of public money to pay so many people large sums, not to mention the ethical questions around coercion.

To give one example, the Add-Aspirin trial was launched earlier this year in the UK to investigate whether aspirin can stop certain common cancers from returning after treatment. It is seeking 11,000 patients from the UK and India. If it recruits only 8,000, the trial may be too small to detect a real effect reliably, and the findings might end up being wrong. The trouble is that some of these studies are still treated as definitive despite there being too few participants to be that certain.
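A rough sketch of what a shortfall like this can cost is given below. It is not the Add-Aspirin trial's actual design, which is not described here. It assumes a generic two-arm comparison with equal groups and a hypothetical standardised effect size, chosen only so that the full 11,000 would give about 90% power.

```python
from math import sqrt
from statistics import NormalDist


def approx_power(n_per_group, std_effect, alpha=0.05):
    """Approximate chance that a two-arm trial detects a real effect.

    n_per_group: participants in each arm
    std_effect: true difference divided by the outcome's standard deviation
                (a hypothetical input, not taken from any real trial)
    alpha: two-sided significance level (assumed default)
    """
    standard_normal = NormalDist()
    z_alpha = standard_normal.inv_cdf(1 - alpha / 2)
    # Normal approximation; ignores the negligible chance of a
    # significant result in the wrong direction.
    return standard_normal.cdf(std_effect * sqrt(n_per_group / 2) - z_alpha)


HYPOTHETICAL_EFFECT = 0.0618  # assumption: tuned so 11,000 people give ~90% power

for total in (11_000, 8_000):
    power = approx_power(total // 2, HYPOTHETICAL_EFFECT)
    print(f"{total:>6} participants: power is about {power:.0%}")
```

Under these assumptions, recruiting 8,000 instead of 11,000 cuts the chance of detecting a genuine benefit from about 90% to a little under 80%, so the risk of missing a real effect roughly doubles.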

One large study looked at trials between 1994 and 2002 funded by two of the UK’s largest funding bodies and found that fewer than a third (31%) recruited the numbers they were seeking. Slightly over half (53%) were given an extension of time or money but still 80% never hit their target. In a follow-up of the same two funders’ activities between 2002 and 2008, 55% of the trials recruited to target. The remainder were given extensions but recruitment remained inadequate for about half.

The improvement between these studies is probably due to the UK’s Clinical Trials Units and research networks, which were introduced to improve overall trial quality by providing expertise. Even so, almost half of UK trials still appear to struggle with recruitment. Worse, the UK is a world leader in trial expertise. Elsewhere the chances of finding trial teams not following best practice are much higher.

The way forward

There is remarkably little evidence about how to do recruitment well. The only practical intervention with compelling evidence of benefit comes from a forthcoming paper, which shows that telephoning people who don’t respond to postal invitations leads to about a 6% increase in recruitment.

A couple of other interventions work but have substantial downsides, such as letting recruits know whether they’re in the control group or the main test group. Since this means dispensing with the whole idea of blind testing, a cornerstone of most clinical trials, it is arguably not worth it.

Many researchers believe the solution is to embed recruitment studies into trials to improve how we identify, approach and discuss participation with people. But with funding bodies already stretched, they focus on funding projects whose results could quickly be integrated into clinical care. Studying recruitment methodology may have huge potential but is one step removed from clinical care, so doesn’t fall into that category.

Others are working on projects to share evidence about effective recruitment more widely among trial teams. For example, we are working with colleagues in Ireland and elsewhere to link research into what causes recruitment problems to new interventions designed to help.

Meanwhile, a team at the University of Bristol has developed an approach that turned recruitment completely around in some trials by basically talking to research teams to figure out potential problems. This is extremely promising but would require a sea change in researcher practice to improve results across the board.

And here we hit the underlying problem: solving recruitment doesn’t seem to be a high priority in policy terms. The UK is at the vanguard but progress is slow. We would probably do more to improve health by funding no new treatment evaluations for a year and putting all the funding into methods research instead. Until we get to grips with this problem, we can’t be confident about much of the data that researchers are giving us. The sooner that moves to the top of the agenda, the better.