The plan to use the Australian Bureau of Statistics to conduct the federal government’s postal plebiscite on marriage reform raises an interesting question: wouldn’t it be easier, and just as accurate, to ask the ABS to poll a representative sample of the Australian population rather than everyone?

Given that the vote is voluntary and non-binding, its sole purpose appears to be to find out what Australians actually think of the idea. On the face of it, conducting a sample survey sounds like an easy and cost-effective alternative to the A$122 million postal vote. But is it?

A margin of error

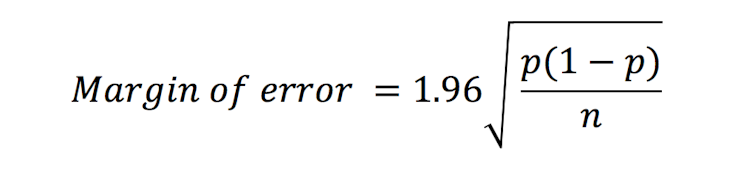

Survey sampling experts use mathematical formulae to compute a margin of error for their polls. This reflects the variability due to the fact that we are dealing with a statistical sample, not the whole population.

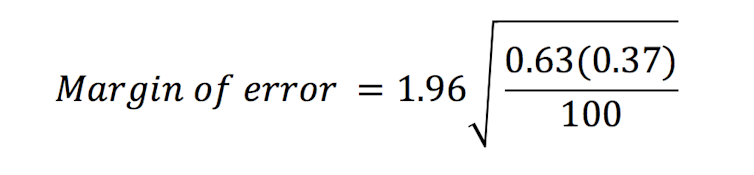

For example, suppose we find that 63 of a simple random sample of 100 people say they believe in marriage reform. Skipping over a few technical nuances for ease of discussion, your statistician friend might help you to compute an associated margin of error.

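There is a simple way to approximate that margin of error at the 95% confidence level, using the following formula, where p is the proportion observed in the sample and n is the sample size:

$$\text{margin of error} \approx 1.96 \sqrt{\frac{p(1-p)}{n}}$$

So in our case p is 63% (or 0.63) and n is 100. Entering this into the formula gives us:

$$1.96 \sqrt{\frac{0.63 \times 0.37}{100}} \approx 0.095$$

Crunching the numbers and rounding up gives us a margin of error in this case of about +/-10%.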

So you could then say something like “we are 95% confident that between 53% and 73% of the Australian population believes in marriage reform”. Clearly that’s a large margin of error and a larger sample would allow us to report a tighter range.

If our survey were based on 1,000 people then the margin of error would reduce to +/-3%, which is what you get in many political opinion polls reported in the media.
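As a rough check on these figures, the calculation can be sketched in a few lines of Python. This assumes the usual normal approximation for a simple random sample, 1.96 × sqrt(p(1 − p)/n). The function name `margin_of_error` and the default multiplier are our own choices for illustration, not something the ABS or any polling firm is known to use.

```python
import math

def margin_of_error(p, n, z=1.96):
    """Approximate 95% margin of error for a proportion p
    observed in a simple random sample of size n (normal approximation)."""
    return z * math.sqrt(p * (1 - p) / n)

# The example from the text: 63% support in samples of 100 and 1,000 people.
moe_100 = margin_of_error(0.63, 100)
moe_1000 = margin_of_error(0.63, 1000)

print(f"n=100:  +/-{moe_100:.1%}")   # +/-9.5%
print(f"n=1000: +/-{moe_1000:.1%}")  # +/-3.0%
```

With 100 people the margin comes out a little under 10 percentage points, and with 1,000 people it drops to about 3.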

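Such a margin of error would be OK if your sample survey result shows something like a 63%/37% split on the issue, because in this case the 3% margin of error would not alter the result. But what if the result was 52% to 48%? With 52% plus or minus 3 points, the plausible range runs from about 49% to 55%, which straddles the 50% line, so the poll alone could not tell you which side is ahead.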
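To make the close-result case concrete, here is a small Python sketch. It assumes the same normal approximation for a simple random sample, and the helper names `margin_of_error` and `required_sample_size` are hypothetical. It works out the 95% range for a 52% result from 1,000 people, and how many people you would need before the margin shrinks below 2 points.

```python
import math

Z_95 = 1.96  # standard-normal multiplier for 95% confidence

def margin_of_error(p, n, z=Z_95):
    """Approximate margin of error for a proportion p from a simple random sample of size n."""
    return z * math.sqrt(p * (1 - p) / n)

def required_sample_size(p, target_margin, z=Z_95):
    """Smallest n whose approximate margin of error is no larger than target_margin."""
    return math.ceil(z**2 * p * (1 - p) / target_margin**2)

p_hat = 0.52
moe = margin_of_error(p_hat, 1000)
low, high = p_hat - moe, p_hat + moe
print(f"n=1000: between {low:.1%} and {high:.1%}")  # the range includes 50%

# To separate 52% from 50% the margin must fall below 2 points.
n_needed = required_sample_size(p_hat, 0.02)
print(f"sample size for a +/-2% margin: {n_needed}")
```

Under these assumptions the required sample is a bit under 2,400 people, and that is before allowing for any of the practical problems discussed next.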

So conducting a reliable survey is simply a matter of ensuring a large enough sample size to guarantee a suitably small margin of error. Easy, right? Unfortunately, there is much more to the story.

Selecting a sample

Another fundamental thing to consider is how you actually go about recruiting the people in your survey.

You could just post your survey on the internet and then sit back and wait for people to respond. The problem is that you don’t know who is going to respond.

You could get a listing of telephone numbers, randomly select people from that, then call them and ask the question. But most people I know don’t even have a landline, and I know how most of us respond when we get one of those annoying computerised calls on the mobile asking us to “please participate in a short survey”.

The spectacular failure of the Literary Digest weekly journal to predict the outcome of the 1936 US presidential election illustrates the danger of relying on telephone listings to identify potential survey participants.

Even though it used a sample size of about 2.4 million people, a fundamental problem was that back in 1936, telephones were still very much a luxury item, particularly in the wake of the Great Depression.

So a sample drawn from telephone listings was biased towards wealthier members of the population, who had different voting habits to the poor and disenfranchised, who were underrepresented in the phone poll.

Drawing the sample from a listing of people who owned a telephone thus broke the cardinal rule of sampling, which is to ensure that the sample is representative of the population of interest.
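A back-of-the-envelope sketch shows why a huge sample does not rescue a biased listing. Every number below is made up for illustration (these are not the real 1936 figures), and the calculation assumes the poll reaches only people who appear in the phone listing.

```python
import math

# Hypothetical electorate, for illustration only (not the real 1936 figures).
SHARE_WITH_PHONE = 0.40       # assumed fraction of voters who appear in phone listings
SUPPORT_WITH_PHONE = 0.35     # assumed support for candidate A among phone owners
SUPPORT_WITHOUT_PHONE = 0.70  # assumed support for candidate A among everyone else

true_support = (SHARE_WITH_PHONE * SUPPORT_WITH_PHONE
                + (1 - SHARE_WITH_PHONE) * SUPPORT_WITHOUT_PHONE)

# A sample drawn only from phone listings estimates support among phone owners,
# no matter how many people are polled.
expected_estimate = SUPPORT_WITH_PHONE
n = 2_400_000
margin = 1.96 * math.sqrt(expected_estimate * (1 - expected_estimate) / n)
bias = expected_estimate - true_support

print(f"true support:      {true_support:.1%}")
print(f"expected estimate: {expected_estimate:.1%}")
print(f"margin of error:   +/-{margin:.2%}")
print(f"bias:              {bias:+.1%}")
```

In this toy example the margin of error is a tiny fraction of a percentage point, yet the estimate is off by 21 points. The margin of error only measures sampling variability. It says nothing about who was left off the list.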

Getting the right sample

A much better strategy is to draw your survey from a listing that is guaranteed to include pretty much everybody, such as the electoral roll. A survey-sampling expert would advise you not to do a simple random sample from the listing, but rather to use some clever strategies to improve your chances of getting a representative sample.

So-called stratified sampling targets survey participants according to characteristics such as age and gender. Multistage sampling strategies might first select from a listing of possible geographical areas, and then sample individuals who live in those selected areas.

While it is a bit more complicated to compute the associated margin of error with such strategies, you can be more confident that your final sample is truly representative of the population.
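As a minimal sketch of the stratified idea, the Python below splits a hypothetical listing into age bands and samples each band in proportion to its size. The function `stratified_sample`, the band sizes and the record layout are all assumptions for illustration. Real survey designs (and multistage sampling in particular) are considerably more involved.

```python
import random
from collections import Counter

def stratified_sample(frame, stratum_of, total_n, seed=0):
    """Draw a proportionally allocated stratified sample from `frame`."""
    rng = random.Random(seed)
    # Group the sampling frame into strata.
    strata = {}
    for unit in frame:
        strata.setdefault(stratum_of(unit), []).append(unit)
    sample = []
    for units in strata.values():
        # Each stratum gets a share of the sample equal to its share of the frame.
        # Rounding means the total can differ slightly from total_n in general.
        k = round(total_n * len(units) / len(frame))
        sample.extend(rng.sample(units, k))
    return sample

# Hypothetical listing of 10,000 people in three age bands (made-up sizes).
band_sizes = {"18-34": 3000, "35-64": 4000, "65+": 3000}
frame = [{"id": f"{band}-{i}", "age_band": band}
         for band, size in band_sizes.items() for i in range(size)]

sample = stratified_sample(frame, lambda person: person["age_band"], total_n=1000)
counts = Counter(person["age_band"] for person in sample)
print(counts)
```

Because each band is sampled separately, the age mix of the sample matches the listing by construction rather than by luck.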

The problem of no-shows

Once you are confident of your survey design, your next challenge is to take account of the fact that some people will inevitably fail to respond. Unlike a compulsory census or election where people are required to respond by law, sample surveys are notorious for the problem of non-response.

Some people may be on holiday and never even see your survey request. Others might see your request, but are uninterested. Others may be interested but too busy or distracted to take part.

Some may be well-intentioned and even fill out the survey you sent them, but lose the envelope before managing to get it to a mailbox. Depending on how diligent you are in chasing up people who don’t respond to your survey, you could easily end up with a scenario in which only 10% of those you were targeting actually respond to your survey.

As long as the chances of non-response are the same for everyone, this is not necessarily a disaster. You can, for example, reflect the reduced sample size in your margin of error calculation.
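For a sense of scale, here is a rough Python sketch of how a 10% response rate changes the margin of error. It assumes non-response is completely random, and uses the normal approximation with p = 0.5 (the worst case). The figure of 10,000 invitations is hypothetical.

```python
import math

def margin_of_error(p, n, z=1.96):
    """Approximate 95% margin of error for a proportion p from a simple random sample of size n."""
    return z * math.sqrt(p * (1 - p) / n)

invited = 10_000      # hypothetical number of people sent the survey
response_rate = 0.10  # the 10% scenario described above
respondents = round(invited * response_rate)

moe_planned = margin_of_error(0.5, invited)     # if everyone had responded
moe_actual = margin_of_error(0.5, respondents)  # with the responses you actually got

print(f"planned: +/-{moe_planned:.1%} from {invited} responses")
print(f"actual:  +/-{moe_actual:.1%} from {respondents} responses")
```

In this sketch the margin roughly triples, from about 1 point to about 3 points.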

If you took your statistician friend’s advice, you would probably have even boosted your initial survey target number in anticipation of non-response. In practice, however, non-response rates tend to vary a lot from person to person.

If the same factors also influence how people vote, then you are in a situation where the results of your survey can be seriously biased.

For example, suppose that older Australians are more likely to respond to the survey than younger Australians. Older Australians might also be more likely to vote against marriage reform than younger Australians. This means that the overall survey results will be biased towards the opinions of the older Australians and consequently underestimate the overall proportion of Australians who are in favour of marriage reform.
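The size of this effect can be illustrated with a few lines of Python. The population shares, levels of support and response rates below are invented purely to show the mechanism and are not estimates of real Australian attitudes.

```python
# group: (population share, support for reform, response rate), all hypothetical
groups = {
    "younger": (0.5, 0.75, 0.20),
    "older":   (0.5, 0.45, 0.50),
}

# What the whole population thinks.
true_support = sum(share * support for share, support, _ in groups.values())

# What the respondents think: each group counts in proportion to share * response rate.
responding = sum(share * rate for share, _, rate in groups.values())
observed_support = sum(share * rate * support
                       for share, support, rate in groups.values()) / responding

print(f"true support:     {true_support:.1%}")
print(f"observed support: {observed_support:.1%}")
```

In this made-up scenario true support is 60%, but the survey would report about 54%, simply because the older group is over-represented among those who respond.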

Weighting your result

There are some clever sample reweighting strategies that can be used to account for non-response rates that vary according to age, sex and other measurable characteristics.
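One of the simplest such strategies is often called post-stratification: weight each group's answers by its known share of the population rather than its share of the responses. The Python sketch below uses invented tallies and assumes that, within each group, respondents hold the same views as non-respondents. It is an illustration of the idea, not the method the ABS uses.

```python
# Hypothetical respondent tallies: group -> (number of responses, number in favour)
responses = {"younger": (1000, 750), "older": (2500, 1125)}
# Known population shares, e.g. from the electoral roll (made-up values here)
population_share = {"younger": 0.5, "older": 0.5}

total_responses = sum(n for n, _ in responses.values())
raw_estimate = sum(yes for _, yes in responses.values()) / total_responses

# Weight each group's observed support by its share of the population.
weighted_estimate = sum(population_share[g] * yes / n
                        for g, (n, yes) in responses.items())

# Equivalent per-respondent weights: population share divided by sample share.
weights = {g: population_share[g] / (n / total_responses)
           for g, (n, _) in responses.items()}

print(f"raw estimate:      {raw_estimate:.1%}")
print(f"weighted estimate: {weighted_estimate:.1%}")
print(weights)
```

Here each younger respondent counts for 1.75 people and each older respondent for 0.7, which moves the estimate from about 54% back to 60%.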

A more insidious problem occurs when a person’s opinion on the question of interest influences their decision on whether or not to respond to the survey. This so-called “informative non-response” tends to be a high risk in settings where emotionally charged questions are being asked.
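A toy calculation shows why this kind of non-response is so hard to fix. In the Python sketch below, with entirely hypothetical response rates, two very different populations produce exactly the same survey result, so nothing in the responses themselves can tell them apart.

```python
def observed_support(true_support, rate_if_for, rate_if_against):
    """Share of respondents in favour when response rates depend on opinion."""
    for_responses = true_support * rate_if_for
    against_responses = (1 - true_support) * rate_if_against
    return for_responses / (for_responses + against_responses)

# Scenario A: the country is split 50/50, but supporters are keener to respond.
evenly_split = observed_support(0.50, rate_if_for=0.30, rate_if_against=0.20)

# Scenario B: 60% really are in favour, and both sides respond at the same rate.
clear_majority = observed_support(0.60, rate_if_for=0.25, rate_if_against=0.25)

print(f"scenario A reports {evenly_split:.1%}")
print(f"scenario B reports {clear_majority:.1%}")
```

Both scenarios report 60% in favour. Reweighting by age or sex cannot help here, because the difference in response rates is driven by the opinion itself.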

So using sample survey methodology to determine the proportion of Australians who believe in same-sex marriage would be a challenge fraught with many of these issues.

That leaves us back with the ABS and the voluntary, non-binding postal survey of everyone on the electoral roll.

The ABS will be able to adjust for some of the inevitable challenges associated with non-response, as long as it also collects relevant demographics such as age, gender, area of residence and so on.

But in a scenario where the results are close to the 50/50 line, I feel it will be a daunting task to deduce how the country really feels about this important question.