Articles on Deepfakes

There’s no escaping generative AI as it infiltrates our workplaces and daily lives. Learning what these tools can do will help you understand their full impact.

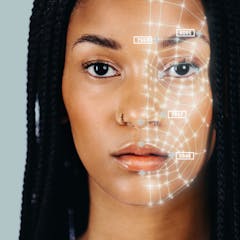

New research found a way to improve the accuracy of deepfake detection algorithms while also enhancing fairness.

The invisible threat of deepfake porn now pervades the lives of women and girls

Deepfake pornography raises questions about consent, sexuality and representation. The issue is more complicated than online misogyny — new criminal laws are not our best response.

As technology has advanced, AI-generated deepfakes have become more convincing.

Using disinformation to sway elections is nothing new. Powerful new AI tools, however, threaten to give the deceptions unprecedented reach.

Catherine is far from the first royal to experiment with photo editing.

The effects of AI’s growth on global security could be difficult to predict.

Low-tech or high-tech, the next year will determine how much action nations take on election interference.

Deepfake scams are on the rise – but can their victims claim compensation? The legal landscape is still developing.

Deepfakes are on the rise in South Africa and many people seemingly struggle to spot them.

With so much advice available, how are we still getting scammed? It’s because cybercriminals use sophisticated psychological techniques to trick us and wear us down.

Disinformation experts, Lilik Mardjianto and Nuurrianti Jalli, tell The Conversation Weekly podcast about the deepfakes circulating ahead of the Indonesian election.

Deepfake technology is widely available, and a pivotal election year lies ahead. The FCC banned AI robocalls, but AI-enhanced disinformation campaigns remain a threat.

The proliferation of non-consensual, sexualised deepfake images is a reflection of society’s negative attitudes towards women.

There’s nothing surprising about the fake explicit images going viral. It happens to women celebrities frequently – but anyone can be targeted.

Youth in a study went from being passive deepfake bystanders to developing a sense of responsibility and readiness to help prevent deepfakes’ spread.

Deepfake technology is widely available, and a pivotal election year lies ahead. The fake Biden robocall is likely to be just the latest of a series of AI-enhanced disinformation campaigns.

Artificial intelligence is everywhere, and the tech industry is racing along to develop ever more powerful AIs. Three scholars look ahead to the next chapter in this technological revolution.

Understanding how deepfakes can be used as a tool for misogyny is an important first step in considering the harms they will likely cause, including through school cyberbullying.