Most of us want our lives to have meaning. But what do we mean by meaning? What is meaning?

These sound like spiritual or philosophical questions but, surprisingly, science may be able to provide some answers.

Meaning might not seem like the kind of thing that can be tackled using the detached and impersonal methods of science. But by framing the right questions, researchers in language, cognitive science, primatology and artificial intelligence can make some progress.

Questions include:

- how do words or symbols convey meaning?

- how does our brain sort out meaningful information from meaningless information?

These are certainly difficult questions, but they’re not unscientific.

Mind your language

Take human language. What distinguishes it from the communication systems used by other animals, such as the sign language we can teach to chimpanzees, bird calls and the waggle dances performed by bees?

One factor is that the systems used by other animals are basically linear: the meaning of each symbol is modified only by the one immediately before it or after it.

For example, here’s a phrase in chimpanzee sign language:

give banana eat.

That’s as complicated as phrases get for chimps. The third word relates to the first only through the second.

But in a standard sentence from any human language, the words at the end can modify the meaning of those back at the start.

Try this:

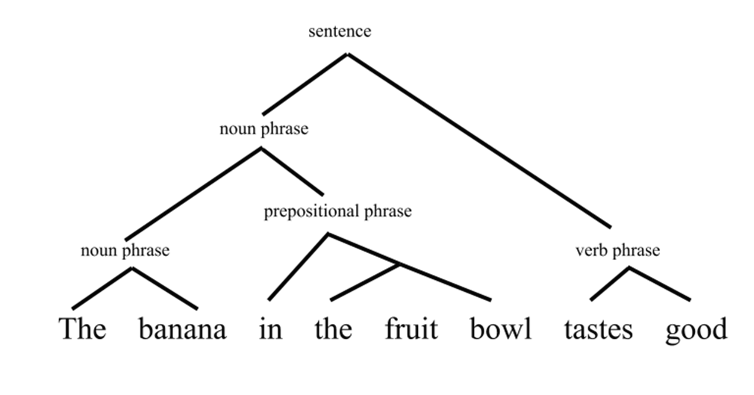

The banana in the fruit bowl tastes good.

It is the banana that tastes good, not the fruit bowl, even though “fruit bowl” and “tastes good” are adjacent.

We effortlessly sort out the meaning in sentences based on hierarchies, so that phrases can be nested in other phrases and it doesn’t cause any problems (most of the time).

Did you ever have to diagram a sentence while learning grammar in school? A sentence of human language has to be diagrammed in a tree-like structure. This structure reflects the hierarchies embedded in the language.
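
To make the contrast concrete, here is a minimal sketch in Python of the banana sentence stored as a tree. The phrase labels (S, NP, PP, VP and so on), the hand-built tree and the helper names are simplifying assumptions made for illustration, not a linguist's full analysis.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class Node:
    """Either a phrase (label plus children) or a single word (label plus word)."""
    label: str
    children: List["Node"] = field(default_factory=list)
    word: Optional[str] = None


def leaf(label: str, word: str) -> Node:
    return Node(label=label, word=word)


# Hand-built, simplified tree for the example sentence (labels are assumptions).
sentence = Node("S", [
    Node("NP", [
        leaf("Det", "The"),
        leaf("N", "banana"),
        Node("PP", [
            leaf("P", "in"),
            Node("NP", [leaf("Det", "the"), leaf("Mod", "fruit"), leaf("N", "bowl")]),
        ]),
    ]),
    Node("VP", [leaf("V", "tastes"), leaf("Adj", "good")]),
])


def words(node: Node) -> List[str]:
    """Flatten the tree back into the ordinary left-to-right word order."""
    if node.word is not None:
        return [node.word]
    return [w for child in node.children for w in words(child)]


def head_noun(phrase: Node) -> Optional[str]:
    """Return the noun sitting directly under this phrase, ignoring nested phrases."""
    for child in phrase.children:
        if child.label == "N":
            return child.word
    return None


def subject_of(s: Node) -> Optional[str]:
    """Hierarchical reading: the subject is the head of the NP directly under S."""
    for child in s.children:
        if child.label == "NP":
            return head_noun(child)
    return None


def linear_guess(s: Node, verb: str) -> str:
    """Linear reading: assume the word right before the verb is what it is about."""
    flat = words(s)
    return flat[flat.index(verb) - 1]


print(subject_of(sentence))              # banana
print(linear_guess(sentence, "tastes"))  # bowl
```

Reading by adjacency picks out the bowl, while walking the tree picks out the banana, which is the reading a human listener arrives at without effort.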

Cognitive scientist W. Tecumseh Fitch, an expert in the evolution of human language, says what separates humans from other species is our ability to interpret things in a tree-like structure.

Our brains are built to group things and to arrange them into hierarchies, and not just in grammar. This opens up a whole universe of meanings that we are able to extract from language and other sources of information.

But complex structure isn’t all there is to meaning. If you’ve seen any computer programming you know that computers can also handle this kind of complex grammar. That doesn’t mean computers find it meaningful.
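
As a rough illustration of that point, the following Python sketch evaluates arithmetic with nested brackets. The tiny grammar and the function names are assumptions chosen for this example. The program resolves phrases nested inside phrases correctly, yet nothing in it finds the result significant.

```python
import re


def tokenize(text):
    """Split the input into numbers, brackets and the two operators."""
    return re.findall(r"\d+|[()+*]", text)


def parse_expr(tokens, pos=0):
    """expr := term ('+' term)*"""
    value, pos = parse_term(tokens, pos)
    while pos < len(tokens) and tokens[pos] == "+":
        rhs, pos = parse_term(tokens, pos + 1)
        value += rhs
    return value, pos


def parse_term(tokens, pos):
    """term := atom ('*' atom)*"""
    value, pos = parse_atom(tokens, pos)
    while pos < len(tokens) and tokens[pos] == "*":
        rhs, pos = parse_atom(tokens, pos + 1)
        value *= rhs
    return value, pos


def parse_atom(tokens, pos):
    """atom := number | '(' expr ')'  (the nesting happens here)"""
    if tokens[pos] == "(":
        value, pos = parse_expr(tokens, pos + 1)
        if pos >= len(tokens) or tokens[pos] != ")":
            raise ValueError("expected ')'")
        return value, pos + 1
    return int(tokens[pos]), pos + 1


def evaluate(text):
    tokens = tokenize(text)
    value, pos = parse_expr(tokens)
    if pos != len(tokens):
        raise ValueError("unexpected trailing input")
    return value


print(evaluate("2 * (3 + 4)"))  # 14
print(evaluate("2 * 3 + 4"))    # 10
```

The two inputs use the same symbols in the same order, and only the brackets differ. As with the banana sentence, the grouping decides what applies to what, not adjacency.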

Research into human brains is trying to find out how we find information significant. We attach emotional and semantic weight to the utterances we speak and hear. The neuroscience of working memory may hold some clues.

A memory of that

We need working memory to pay attention to those long sentences that have the complex grammar described above.

Working memory also helps us knit together the experience of waking life, moment to moment. We experience a vivid and comprehensible stream of consciousness, rather than staccato flashes of action.

One of the leading researchers in this area is the French neuroscientist Stanislas Dehaene. In his 2014 book Consciousness and the Brain: Deciphering How the Brain Codes Our Thoughts, he advocates what’s known as the global workspace theory.

When something really grabs our attention, it’s elevated from being dealt with by unconscious, localised brain processes to the global workspace. This is a metaphorical “space” in the brain, where important signals are broadcast throughout the cortex.

Roughly speaking, if a signal doesn’t get amplified to the global workspace then it stays local and our brains deal with it unconsciously. If information gets to the global workspace then we’re conscious of it.

Information from different sensory inputs — vision, hearing, touch — then gets put together to form an overall interpretation of what’s happening and how it’s meaningful to us.

Working together

Moving beyond an individual’s brain, a lot of work has been done on social cognition: that is, how humans are particularly good at thinking together and cooperating.

Obviously that goes hand in hand with our more complex language. But there are other abilities that seem to have evolved alongside language that are also unique to humans and crucial for cooperation.

Michael Tomasello, director of the Max Planck Institute for Evolutionary Anthropology in Germany, has been studying chimps side by side with human infants for 25 years.

He emphasises the role of shared intentionality. From about age three, and unlike apes, human infants can easily, even wordlessly, cooperate on simple tasks.

To do so they have to monitor their own actions, the actions of others, and both sets of actions in light of a shared goal or set of expectations.

This might not seem like a staggering result. But Tomasello argues this is essentially the origin of human morality. By adopting the perspective of shared intentionality, humans evolved norms or conventions that shape our shared behaviour.

This perspective allows us to evaluate actions and behaviour in broader terms than simply whether or not they provide some instant reward. Hence we can judge things as meaningful or not according to norms, values and morals.

But what does it all mean?

So complex grammar, working memory and cooperation are just three areas of research out of dozens that are relevant. But researchers from various disciplines are zeroing in on what meaning is at a very fundamental level.

Meaning seems to be about the complexity of information, the integration of information over longer periods of time, and the sharing of information with others.

That might sound remote from questions like, “How do I make my life meaningful?” But the science does actually line up with the self-help books on this score.

The gurus say that if you can find some alignment in your past, present and future selves (integrating information over time) you’ll feel your life has meaning.

They also tell you it’s very important to be socially connected rather than isolated. Translation: share information and cooperate with others.

It’s not that science can tell us what the meaning of life is. But it can tell us how our brains find things meaningful and why we evolved to do so.