The idea that we should audit universities is, in many respects, a good one. It can be used to keep them accountable and it can be a driver of change. University rankings – such as those offered by Times Higher Education magazine and several national newspapers – offer an alluring map of the complex terrain of higher education.

But we should be wary of attempts to reduce a complex entity like a university – let alone the granular and changing experience of any particular student – to a set of scores. As with any exercise in measurement, we should ask not just what it tells us, but also what it chooses to leave out.

University rankings are a big and reasonably diverse business. Times Higher Education (THE) produces a range of charts throughout the year: the top universities, the top new universities, the top universities according to reputation, region or subject. The Guardian does not include a measure of research, focusing instead on student experience, including graduate feedback and staff-to-student ratio. The Sunday Times does include research, based on official UK data, and folds in official data on “teaching excellence”, along with the numbers of firsts and 2:1s awarded and drop-out rates. The Times reflects student satisfaction, research, completion and, interestingly, money spent on facilities, with particular focus on library and computing spending.

In turn, university PR offices lap it up, happy to add a logo as a simple (some might say simplistic) way to express their worth compared to others. Newspapers also know how much easy copy they can generate from “X elite institution drops Y places in Z ranking”, and how much attention it draws from the sort of rich graduates their advertisers want to see them attracting, so they extensively cover each other’s league tables.

The Shanghai Jiao Tong University Academic Ranking of World Universities, published last week, attracts more attention than most. This chart of global university prowess started back in 2003 with the aim of helping Chinese universities rate themselves against the rest of the world. As with most rankings, its publication is greeted each year with delight by those who score well and handwringing by those who don’t. But overall, Shanghai is something of a black box. When researchers put the methodology under scrutiny in 2007, they were unable to replicate the results.

Many other ranking systems do, increasingly, offer extensive details of their methods. But I’m not sure that excuses their existence. They all still play into the idea that universities can and should be ranked against each other, which leaves me uncomfortable.

Fold in the Research Excellence Framework, the work of the Quality Assurance Agency for Higher Education, scrappier “consumer-led” projects like Rate my Professor or targeted projects like People and Planet’s “Green League” and you can see why many in higher education feel over-audited. I don’t think we should be scared of studying ourselves though, or of being put under scrutiny by others. Auditing is a highly political act; the trick is not to imagine we can de-politicise it with more, fewer or more open audits, but rather to check that the processes fit our politics.

As sociologist of education David Gillborn has eloquently argued, the criteria used to measure success in education often embody the perspectives and assumptions of those who already hold various forms of social privilege, and so act as a powerful force for legitimating and extending existing inequalities, be they of colour, gender, class or in terms of special educational needs. Because yes, Professor Dawkins, Trinity College Cambridge has a lot of Nobel Prizes, but it’s also the richest of the Oxbridge colleges, and we should probably unpack not only how this may have an impact on staff and student success but also how such bodies shape our very ideas of what counts as successful.

A key problem is the competitive nature of the rankings. Universities play games to outdo each other on rather arbitrary – or at least unhelpfully abstracted – tasks rather than exploring deeper issues within their own systems, all too often relying on short-term, cosmetic adjustments. I was pleased, for example, to see pressure from publicity surrounding the National Student Survey scare one university into enforcing speedier turnaround on marking coursework. But that needed to be accompanied by changes in staff workload and broader cultural changes to focus attention on students; otherwise the result is just rushed and shallow feedback.

More broadly, the competitive nature of university rankings reflects the weird game of snakes and ladders we have somehow let education become, where this concept of “social mobility” is largely a matter of climbing some imagined linear hierarchy rather than of wider social or personal change. And that seems wasteful to me, because it forgets the worth of those people and institutions left below. It also seems plain unimaginative in terms of the possible transformational power of higher education.

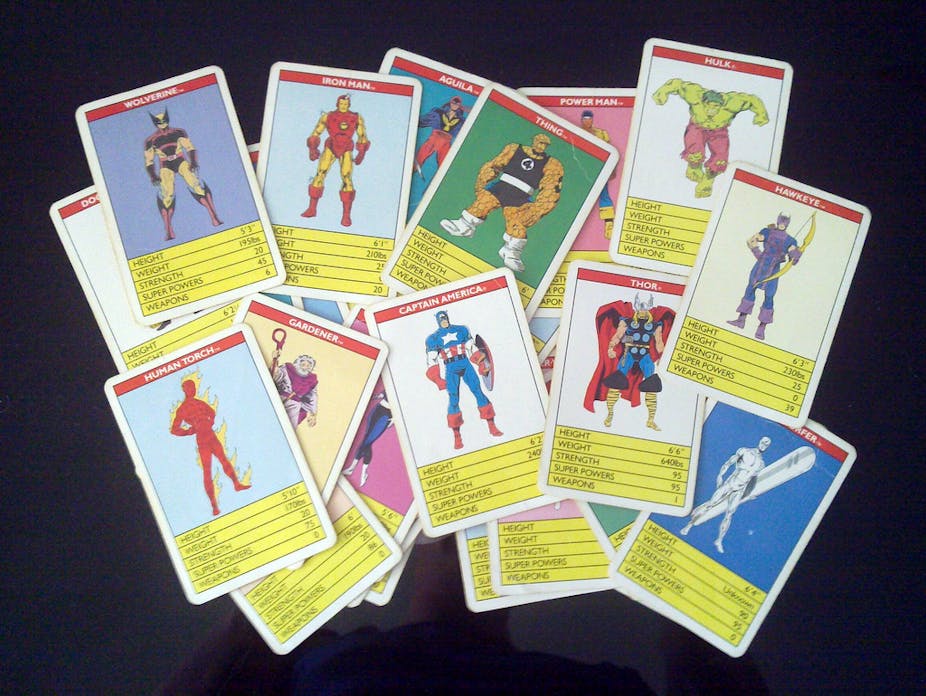

By all means ask questions about higher education institutions, but resist the lure of the ranking tables. It’s university, not Top Trumps.