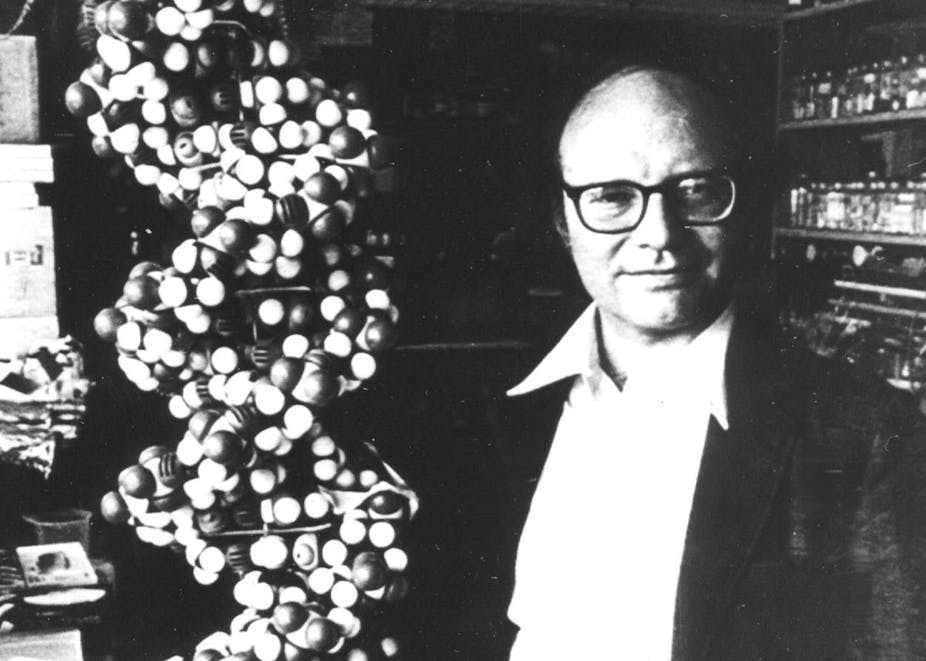

Walter Gilbert won the 1980 Nobel Prize in Chemistry for his contributions to DNA sequencing, or “determination of base sequences in a nucleic acid”. Mohit Kumar Jolly, a researcher at Rice University and contributor to The Conversation, interviewed him at the 2014 Lindau Nobel Laureates Meeting.

You received the Nobel Prize for DNA sequencing. When do you think we will be able to get our genomes sequenced cheaply?

Sequencing is definitely becoming cheaper and more accessible. One can sequence a couple of full genomes today for less than US$50,000. In 1985, the cost of sequencing human DNA was thought to be around US$3 billion. I hope that by 2020, drug stores can do genome sequencing for a few hundred dollars.

But one must be careful – whole genome sequencing is not at all accurate for medical diagnosis. I got my own genome sequenced, but they missed the local rearrangements in my genome – it was not well-curated.

Also, it is a common belief that once we can sequence the genome, we can edit it to have babies with a higher IQ, for example. This is a myth, because it is very rare that one gene corresponds to one property.

What do you think about the prospects of personalised medicine?

I support the cause of personalised medicine. I believe that it has two underlying themes – each one of us has a different metabolism and each one of us has a different manifestation of the same disease. My cancer is not the same as your cancer, so ultimately the only way one can categorise is to have a limited number of subtypes and then develop drugs against those subtypes.

But as you can see, big pharma companies of course do not want people to believe in personalised medicine. Otherwise, how would they sell their generic drugs? I don’t understand why they don’t realise that clinical trials get easier and much cheaper with subtyping – they do not play this market game well.

What are your views on “big data”?

Big data promises to collect large sets of data and find associations between genes and diseases. There’s definitely something useful in the data collected, but the danger is that we have no clue how to interpret it. Also, you must remember that not all statistically significant things are biologically significant. So, it is definitely not a panacea.

There was a recent controversy about patenting genes. What did you make of that?

I agree with the US Supreme Court decision that one cannot patent anything that exists naturally. Since a gene is a part of the genome, I don’t think one should be allowed to patent it. But companies are allowed to patent some genetic tests that identify risks for certain diseases based on one’s genes.

What problems does science face today?

We are spending money on problems that can have immediate outcomes. Then we are forced to use only our current level of understanding. There is a lot that we don’t know. Imagine if we had asked Benjamin Franklin to justify the importance of the “spark” he had found: would we have had electricity today?

Another major problem is the explosion in scientific manpower that has not necessarily led to the betterment of science, especially in biology. In fact, the amount of bad material that gets published has increased. In biology, the top journals – Cell, Science and Nature – have created a mess. They tell the authors “give me the headline, not the data”. And then we see retractions and shattered careers and dreams.

What advice would you like to give to young scientists?

Do not blindly believe whatever you read. I often used to give my students papers that said opposite things and then tell them to explain to me how they were consistent, if at all. Also, do not continue science if it does not excite you. Science cannot be a nine-to-five job.