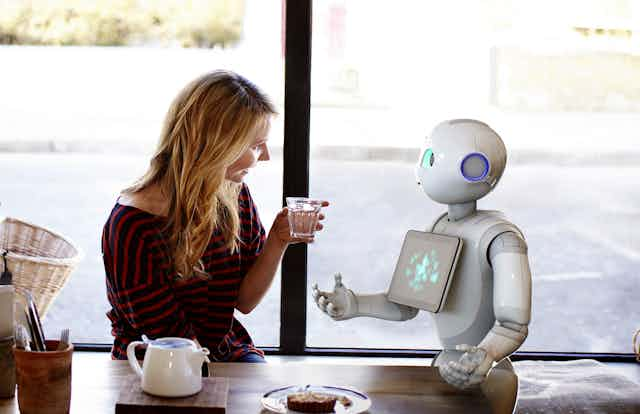

The Japanese robot Pepper, made by Aldebaran Robotics, has sparked interest regarding the potential of robots to become companions.

According to its makers, Pepper can recognise emotions from your facial expressions, words and body gestures, and adjust its behaviour in response.

This heralds a new era in the development of sociable artificial intelligence (AI). But it raises the inevitable question as to whether AI is ever likely to be able to understand and respond to the experiences of humans in a genuine manner, i.e. have empathy.

In their shoes

In order to answer this question, it is important to consider how humans experience empathy. In general, there seem to be two kinds. The first is “cognitive” empathy, or the ability to judge what another person might be thinking, or to see things from their point of view.

So, if a friend went for two job interviews and was offered one job but not the other, what might you be expected to think? Should you be pleased or disappointed for them, or see it as a non-event? Your response to this depends on what you know of your friend’s goals and aspirations. It also depends on your ability to put yourself in their shoes.

It is this particular ability that may prove difficult for a man-made intelligence to master. This is because empathic judgements are dependent on our capacity to imagine the event as if it were our own.

For example, fMRI scans have shown that the same region of the brain is activated when we are thinking about ourselves as when we are asked to think about the mental state of someone else.

Furthermore, this activation is stronger the more similar we see ourselves to be to the person we are thinking about.

There are two components here that would be hard to manage in a robot. First, the robot would need to have a rich knowledge of self, including personal motivations, weaknesses, strengths, a history of successes and failures, and high points and low points. Second, its self-identity would need to overlap with that of its human companion sufficiently to provide a meaningful, genuine shared base.

Share my pain

The second kind of empathy allows us to recognise another’s emotional state and to share their emotional experience. There are specific cues that signal six basic emotional expressions (anger, sadness, fear, disgust, surprise and happiness) that are universal across cultures.

AI such as Pepper can be programmed to recognise these basic cues. But many emotions, such as flirtation, boredom, pride and embarrassment, do not have unique sets of cues; their cues vary across cultures.

Someone may feel a strong emotion but not wish for others to know, so they “fight against” it, perhaps laughing to cover sadness. As with cognitive empathy, our own experiences and ability to identify with the other person will assist in identifying subtle and complex feelings.

Even more challenging for AI is the visceral nature of emotion. Our nervous system, muscles, heart rate, arousal and hormones are affected by our own emotions.

They are also affected by someone else’s emotions when we are empathic. If someone is crying, our own eyes may moisten. We cannot help but smile when someone else is laughing. In turn, these facial movements lead to changes in arousal and cause us to feel the same emotion.

This sharing may be a way of communicating our empathy to another person. It may also be critical to understanding what they are feeling.

By mirroring another’s emotions, we gain insight by subtly experiencing the emotion ourselves. If we are prevented from mimicking, say by clenching a pencil between our teeth while watching others, our accuracy in identifying their emotion decreases.

Some robotics research has used sensors to detect a human’s physical emotional responses. The problem, once again, is that constellations of physical changes are not unique to particular emotions. Arousal may signal anger, fear, surprise or elation. Nor is recognising the physical state of another the same as empathic sharing.

Feeling it

So are empathic robots coming? New advances in computer technology are likely to continue to surprise us. However, true empathy assumes a significant overlap in experience between the subject of the empathy and the empathiser.

To put the shoe on the other foot, there is evidence that humans smile more when faced with a smiling avatar and feel distress when robots are mistreated, but are these responses empathic? Do they indicate that humans understand and share the robot’s internal world?

The world of AI is changing rapidly, and robots like Pepper are both intriguing and potentially endearing. It is difficult to predict where advances like Pepper will lead us in the near future.

It may be, for example, that “near enough is good enough” when it comes to everyday empathy between human and machine. On the other hand, we are still a long way from fully understanding the complexities of how human empathy operates, so we are still far from being able to simulate it in the machines we live with.