An article published in the British Medical Journal (BMJ) today says a US charity “overstates the benefit of mammography and ignores harms altogether.” The charity’s questionable claim is that early detection is the key to surviving breast cancer. To support this, it cites a five-year survival rate of 98% when breast cancer is caught early, and 23% when it’s not.

We’re not interested in judging the charity’s actions or intentions, but we would like to discuss the importance of statistical literacy in communicating medical risks.

There are two critical claims in the argument presented by the experts in the BMJ report – that routine breast screening produces a high number of false positive diagnoses and that five-year survival rates are biased. It’s necessary to understand both to judge whether the statistics quoted by the charity are misleading.

False positive diagnoses

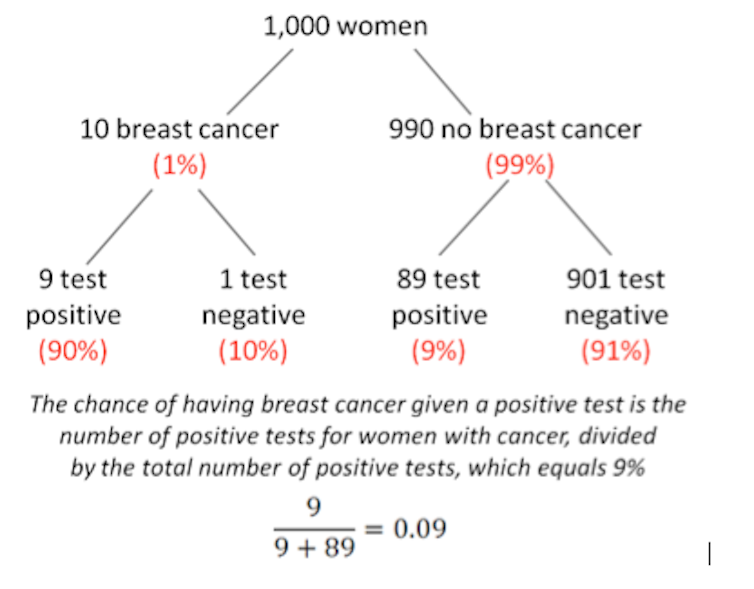

What would you think if your routine mammogram came back positive? Most women would justifiably fear the worst. And what you probably won’t be considering is the high false positive rate of screening tests (9%) combined with the low probability of breast cancer in the female population (about 1%, but note that this is different to lifetime risk, which is about one in nine). This combination means a lot of false diagnoses.
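To see how these two numbers combine, here is a minimal sketch in Python. The 9% false positive rate and 1% base rate come from the paragraph above. The sensitivity (the share of real cancers the test picks up) is not stated in this article, so the 90% used here is an assumption for illustration only, as is the function name `screening_outcomes`.

```python
def screening_outcomes(population, base_rate, false_positive_rate, sensitivity):
    """Split a screened population into true and false positives.

    Returns (true_positives, false_positives, share of positives that are real).
    """
    with_cancer = population * base_rate
    without_cancer = population - with_cancer
    true_positives = with_cancer * sensitivity
    false_positives = without_cancer * false_positive_rate
    share_real = true_positives / (true_positives + false_positives)
    return true_positives, false_positives, share_real


# 1% base rate and 9% false positive rate are taken from the article.
# The 90% sensitivity is an ASSUMPTION, not a figure from the article.
tp, fp, share_real = screening_outcomes(1000, 0.01, 0.09, 0.90)
print(f"True positives:  about {tp:.0f} of 1,000 women screened")
print(f"False positives: about {fp:.0f} of 1,000 women screened")
print(f"Chance that a positive result reflects cancer: {share_real:.0%}")
```

Under these assumptions, about nine women in 1,000 get a correct positive result and about 89 get a false one, so roughly nine in ten positive screening results are false alarms. A different sensitivity would shift the exact figures, but not the overall picture.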

It’s important to remember that we are talking here about the outcomes of widespread screening in the absence of well-defined risk factors – not the screening of women in specific high-risk groups, defined by factors associated with age, genetic predisposition, exposure and lifestyle.

The statistics would be different for high-risk groups because the base rate of the disease will be different (higher). In the case of routine screening, however, positive diagnoses need to be treated with caution, and serious action should not be taken on the results of a screening diagnosis alone.

Five-year survival statistics

Imagine a group of women all diagnosed with breast cancer at the same time. The proportion of those still alive after five years is called the five-year survival rate. It’s calculated by dividing the number of women diagnosed with breast cancer still alive after five years, by the total number of women diagnosed with breast cancer.

Now imagine a random group of women, not defined by breast cancer diagnosis. The proportion of those who die of breast cancer within a 12-month period is called the annual mortality rate. It’s calculated by dividing the number of women who die of breast cancer within a 12-month period by the number of women in the random group.
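The key difference between the two measures is the denominator: diagnosed women in one case, all women in the other. The short sketch below makes that explicit. Every count in it is hypothetical and chosen only to show the arithmetic.

```python
def five_year_survival_rate(alive_after_five_years, total_diagnosed):
    """Share of diagnosed women still alive five years after diagnosis."""
    return alive_after_five_years / total_diagnosed


def annual_mortality_rate(deaths_in_year, total_women):
    """Share of ALL women in the group who die of the disease in one year."""
    return deaths_in_year / total_women


# HYPOTHETICAL counts, for illustration only.
survival = five_year_survival_rate(alive_after_five_years=800, total_diagnosed=1000)
mortality = annual_mortality_rate(deaths_in_year=30, total_women=100_000)

print(f"Five-year survival rate: {survival:.0%}")
print(f"Annual mortality rate: {mortality * 100_000:.0f} deaths per 100,000 women")
```

Because the survival rate depends on who gets diagnosed and when, anything that changes the pool of diagnosed women can change it without changing how many women die.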

It’s often claimed that the five-year survival rate gives an inflated, or overly optimistic, picture of survival compared to mortality rates. This optimistic picture of survival comes from two sources of bias.

Lead-time bias

The first of these sources is known as lead-time bias. Imagine a woman who is diagnosed with breast cancer at age 67. She dies three years later at age 70. The five-year survival rate in this case is 0% – she survived only three years, not five.

Now imagine this same woman was instead diagnosed with breast cancer as a result of routine screening at age 60. She still dies at 70, but because she has survived ten years (rather than three), the five-year survival rate is 100%. Although the mortality age is exactly the same, the five-year survival rate is dramatically different.
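The same example can be written out as a few lines of Python. This is only a sketch of the scenario described above; the function name is ours.

```python
def survived_five_years(age_at_diagnosis, age_at_death):
    """True if the patient lived at least five years after diagnosis."""
    return age_at_death - age_at_diagnosis >= 5


AGE_AT_DEATH = 70  # identical in both scenarios

scenarios = [
    ("diagnosed at 67", 67),
    ("diagnosed at 60 through screening", 60),
]

for label, age_at_diagnosis in scenarios:
    years = AGE_AT_DEATH - age_at_diagnosis
    counted = survived_five_years(age_at_diagnosis, AGE_AT_DEATH)
    print(f"{label}: lived {years} years after diagnosis, "
          f"five-year survivor: {counted}")
```

Only the diagnosis date moves. The age at death stays at 70 in both runs, yet the woman switches from counting against the survival rate to counting towards it.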

Over-diagnosis bias

The other source of bias is called over-diagnosis. Over-diagnosis is not the same as false diagnosis, which we mentioned at the start of this piece. Rather, over-diagnosis refers to non-progressive cancers and “pseudo-disease”.

Pseudo-diseases are abnormalities that meet the technical definition of cancer, but are unlikely to ever cause symptoms, let alone death. Non-progressive cancers are unlikely to cause death within the five-year survival rate time frame.

How much over-diagnosis inflates the five-year survival rate depends on the type of cancer. For breast cancer, some estimates of pseudo-disease are as high as one-in-four of all diagnoses made by screening. For these women, a positive diagnosis may mean unnecessary chemotherapy, radiation or surgery.
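A rough sketch shows how over-diagnosis can lift the survival rate without saving a single life. All counts below are hypothetical, and the sketch assumes every over-diagnosed woman is still alive five years after diagnosis.

```python
def survival_rate_with_overdiagnosis(diagnosed, alive_after_five_years, overdiagnosed):
    """Five-year survival rate once over-diagnosed cases are added.

    Over-diagnosed cases are assumed never to progress, so each one is added
    to both the survivors and the total diagnosed. Deaths are unchanged.
    """
    return (alive_after_five_years + overdiagnosed) / (diagnosed + overdiagnosed)


# HYPOTHETICAL cohort: 1,000 women diagnosed, 500 alive after five years.
diagnosed, alive = 1000, 500
deaths = diagnosed - alive

without_overdiagnosis = survival_rate_with_overdiagnosis(diagnosed, alive, 0)
# HYPOTHETICAL: screening adds 250 over-diagnosed cases.
with_overdiagnosis = survival_rate_with_overdiagnosis(diagnosed, alive, 250)

print(f"Survival rate without over-diagnosis: {without_overdiagnosis:.0%}")
print(f"Survival rate with over-diagnosis:    {with_overdiagnosis:.0%}")
print(f"Deaths in both cases: {deaths}")
```

In this made-up cohort the five-year survival rate rises from 50% to 60%, while the number of deaths stays at 500.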

Alternative measures

Critics of the five-year survival rate make two recommendations. The first is to report absolute risks (the risk of developing a disease over a period of time) rather than relative risks (which compare the risk in two different groups of people).

The BMJ article reports the absolute risk of a woman in her 50s dying from breast cancer over the next ten years as being reduced from 0.53% to 0.46% with mammography – a difference of 0.07 percentage points. This compares with the 25% relative risk reduction that is often cited in support of screening.

The second recommendation is to report risks in “natural frequencies” – in real numbers, like ten out of 1,000 (as shown in our figure above) rather than percentages and probabilities. There’s good empirical evidence suggesting the presentation of absolute risks in natural frequencies is a much clearer way to communicate medical risks to doctors and patients alike.
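Both recommendations can be applied to the figures quoted from the BMJ article. The sketch below takes the two ten-year risks (0.53% and 0.46%) and reports the change as an absolute difference, as a relative difference and as a natural frequency. The function names and the choice of 10,000 women as the reference group are ours.

```python
def absolute_risk_reduction(risk_without, risk_with):
    """Difference between the two risks, as a proportion."""
    return risk_without - risk_with


def relative_risk_reduction(risk_without, risk_with):
    """The same difference, expressed as a share of the starting risk."""
    return (risk_without - risk_with) / risk_without


def per_group(risk, group_size=10_000):
    """Express a risk as a natural frequency: cases per group_size women."""
    return risk * group_size


# Ten-year risks for a woman in her 50s, as quoted from the BMJ article.
risk_without_screening = 0.0053
risk_with_screening = 0.0046

arr = absolute_risk_reduction(risk_without_screening, risk_with_screening)
rrr = relative_risk_reduction(risk_without_screening, risk_with_screening)

print(f"Without screening: {per_group(risk_without_screening):.0f} deaths per 10,000 women")
print(f"With screening:    {per_group(risk_with_screening):.0f} deaths per 10,000 women")
print(f"Absolute reduction: {per_group(arr):.0f} fewer deaths per 10,000 women")
print(f"Relative reduction from these two figures: {rrr:.0%}")
```

In natural frequencies, that is 53 deaths per 10,000 women without screening against 46 with it, or seven fewer deaths. The relative reduction worked out from these two figures alone is about 13%; the 25% quoted above is a commonly cited figure and is not derived from this pair of numbers. Either way, the relative number sounds far larger than seven in 10,000.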

Improved statistical literacy about breast cancer screening is vital because it means that people can make informed decisions about screening and seek a second opinion if a test comes back positive.