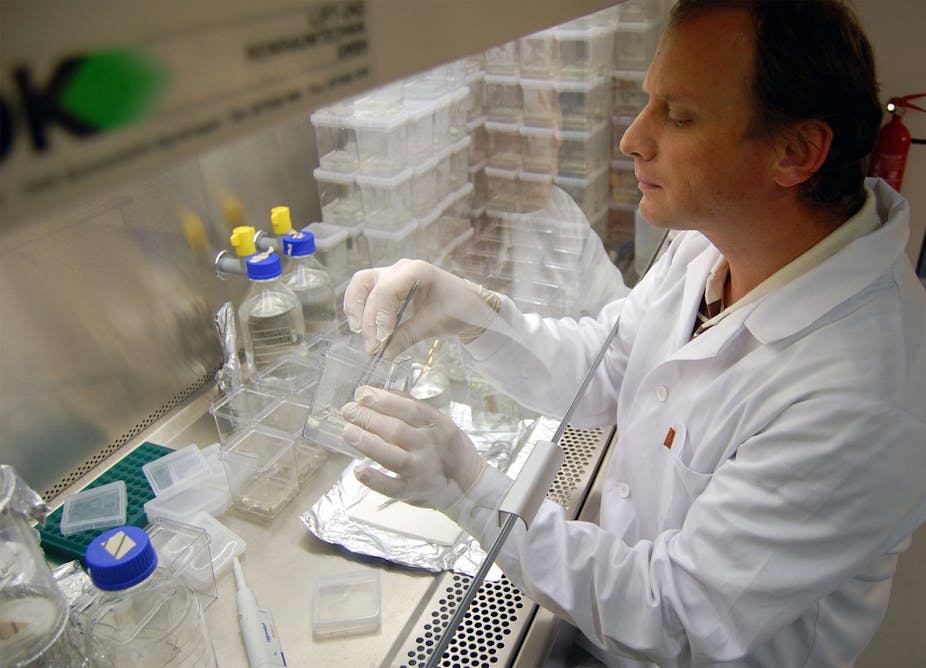

Two studies, one carried out in the Netherlands by Ron Fouchier and the other in Japan by Yoshihiro Kawaoka, are causing controversy over the creation of a new strain of H5N1 Avian Influenza or “bird flu” in ferrets.

The studies have resulted in a new strain of bird flu that’s easily transmissible through the air between ferrets, whose reactions to influenza closely mirror ours.

It’s claimed these studies could help develop new treatments against flu, assist in disease surveillance by tracking the evolution of the disease in the wild and prepare against a new pandemic.

But the existence of a new strain of airborne avian flu is deeply concerning; half of the 600 people infected with the wild strain died. An airborne strain could spell disaster if accidentally or intentionally released. This contest between the good and bad uses of the study is an example of what bioethicists call the “dual-use dilemma”.

Because of the risk of misuse, the National Science Advisory Board for Biosecurity (NSABB) has recommended that Nature and Science, the journals that will be publishing the studies, partly censor them in an effort to prevent sensitive information from getting into the wrong hands.

The NSABB has no legal power to mandate this censorship, and this recommendation is the first of its kind.

Unsurprisingly, on top of the attention the studies have received, the decision to censor has become the subject of controversy. Some say it is “too little, too late” while others are arguing that secrecy won’t stop anyone who really means harm.

But more worrying is that such censorship runs against openness, a central tenet of science. And we risk not being prepared for a disease pandemic by depriving researchers of information they could use to protect us against both natural outbreaks and bioterrorism.

Questioning openness

We sacrifice openness for other gains all the time. I doubt anyone would take me seriously if I suggested publishing the personal information of the subjects of human trials in medicine, for instance, even though withholding it reduces openness and might even slow progress. Violating the privacy of individuals is just too much of a cost to bear in the name of openness or progress.

We are committed to reducing openness in the name of other important goals. What this conversation should really be about is where we draw the line between openness and other values. It’s a tough line to draw, but we’re already engaged in the processes of setting those limits even if we aren’t aware of it.

We could claim that censorship limits the ability of scientists to reproduce and verify the findings of the studies in question. But the National Institutes of Health are already working on a mechanism to release the information to a select few for verification purposes.

We could claim we lose out on valuable innovation. Innovation can strike out of the blue, and censorship means closing off those possibilities. Yet such benefits, however unknown, would have to be weighed against the risks of publication and accidental or intentional release of the virus. And the risk this poses is great indeed.

Science by humanity, for humanity

The gains we think we might garner are contingent on so many other factors. Managing disease pandemics depends on us developing new and improved vaccines, but that’s only a part of the puzzle. The larger problems are the social, economic, design and ethical components – the human factors. Managing pandemics, much like anything else in health, relies on managing complex social and environmental factors.

Without scientific advances, the benefits of studies like the bird flu study are limited. What good are new vaccines without the best possible understanding of where to dispense those vaccines? What if a city’s traffic congestion prevents access to points of delivery of medicines? What about in societies where a breakdown in day-to-day healthcare has left the citizenry distrustful about going to the health authorities to report their illnesses, thereby undermining disease surveillance? What about societies in which health education isn’t sufficient for individuals to manage complex medication schedules?

These aren’t all problems for the flu, and they aren’t problems for every society. But they are large, technically challenging, and ethically fraught issues. Dealing with them is our best chance for improving health and setting a responsible backdrop against which science can progress and bring the benefits it promises.

At the end of the day, everyone has to pitch in to solve these problems. Studies like this one may require censorship. But they also require us to remain committed to developing alternative means of managing the risks of science, so that censorship stays a last resort.

Scientists have responsibilities towards ethics and safety. But they shouldn’t be the only ones who bear those responsibilities. Safety in the lab should be mirrored in the world. We should do what we can to make sure our world is the best kind of place to bring this new knowledge into.

Demanding more accountability from governments and between states is important to prevent the misuse of the life sciences. As I write, the seventh review conference of the Biological and Toxin Weapons Convention is wrapping up in Geneva. This review process is mired in controversy and political stagnation. We don’t have a verification measure in place for biological weapons like we do for nuclear weapons, which makes the risks of state-sponsored bioterrorism and biological weapons programs higher than they have to be and censorship just that much more prudent.

Science is a global public good, and it is everyone’s responsibility, whether as scientists or citizens, to make sure we all get to enjoy it safely.