In a joint statement, ten editors representing some of academia’s most prestigious journals for management, organisational behaviour and work psychology research have vowed to publish research that fails to support its hypotheses.

Their message: we will now publish good (that is, well-conceived, designed, and conducted) research even if the proposed hypotheses are not supported or the study yields “null” results.

Why publish something that does not support the hypothesis being put forward? It seems counter-intuitive, but the reasons are more complex than they first appear.

Not all data is equal

Nobel Prize-winning economist Ronald Coase is credited with saying: “If you torture the data long enough, it will confess to anything”. Indeed, evidence suggests that no discipline’s scientific literature is free from bias and questionable practices, such as selectively reporting hypotheses, excluding data points and variables post hoc, and rounding down “p values” so that non-significant results (those that could plausibly have arisen by chance) appear significant (that is, suggestive of a systematic effect).

While some statistical and analytical adjustments can be entirely justified, especially when fully reported, more questionable practices tend to stem from the belief that getting published requires a “tidy” story, which in turn demands “clean” results.

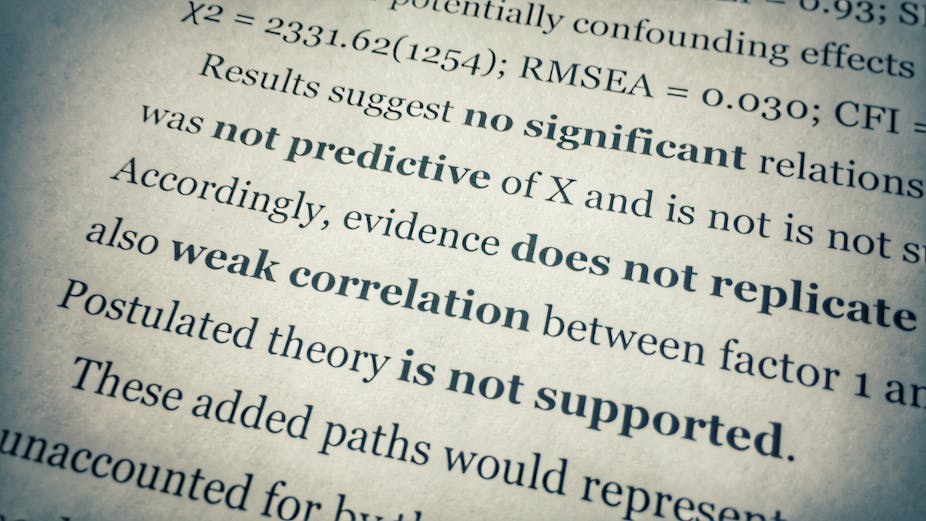

Those beliefs are not unfounded. There is evidence of severe replication problems, and evidence that the publicly available literature on a given phenomenon is not representative of all the studies completed on it.

This publication bias has long been recognised and is often debated, because its consequences include overestimated effect sizes (relationships appear stronger than they really are) and the proliferation of theories and paradigms (a topic or explanation takes undeserved precedence over alternatives).

The publishing quandary

One dominant issue relates to the editorial review process and its implications. Journals seek to raise their impact so that they become more attractive to future authors who want to maximise the dissemination of their best research. A journal that publishes important research gets read and cited more, thereby increasing its ranking in the competitive market of scientific outlets, and in turn receives more submissions to choose from.

In addition, evidence shows that journal editors and reviewers can be biased toward publishing articles with statistically significant results, for instance by being less critical of a study’s methodology when most of its results are positive.

Meanwhile, most scholarly authors are evaluated against the impact factor of the journals they publish in; in academia this often determines nothing less than reputation, hiring decisions, promotion, tenure, research funding, and pay level. Because individuals are highly incentivised to publish in the top journals, some may engage in questionable research practices to achieve that.

Taken together, these pressures mean that positive, affirmative and neat research narratives appear more likely to get published and noticed. If so, the available research evidence might not just inform but also distort some of our work practices and organisational policies.

The new two-stage process

In the largest initiative to date in the organisational and managerial sciences, the ten journals will introduce and pilot a two-stage review process for empirical contributions.

In the first stage (Stage 1), scholarly authors will present journal reviewers with an abbreviated paper comprising the theory, methodology, measurement information, and analysis plan, but no results or discussion.

The partial manuscript will then be rejected, given a “revise and resubmit” (R&R) decision, or accepted in principle for publication. An in-principle acceptance ultimately leads to Stage 2: submission of a traditionally formatted manuscript that also includes results and discussion sections.

It’s a small change in editorial and review protocols but a large step for the scientific community, and ultimately for everyone. Papers can now be evaluated on the merit, rigour, and quality of the project rather than on what is actually found. What counts is the importance of the research question and the strength of its theoretical justification, not whether the hypothesis holds true.

Re-establishing trust

This change alleviates several pressures. Early-career researchers in particular are under considerable pressure to publish in top journals, so it is tempting for them to opt for safer avenues instead of pursuing novel ideas with uncertain outcomes. Now, all researchers can simply discuss the theoretical and conceptual meaning and limitations of what they found, and embrace what did and did not pan out. In doing so, they can help restore some of the authority of the scientific community and of the work it contributes to society.

It will also mean that they can opt to submit what might be called an “extensive research proposal”, explaining what will be researched, why this is important, and how it will be carried out. This allows reviewers to provide formative feedback on how to enhance the planned study before researchers invest time and money in collecting and analysing data, a perk typically reserved for PhD candidates, who receive such feedback from their supervisors.

Journal reviewers often provide very constructive feedback on methodology and on how to make a stronger contribution. Under the traditional model, however, that feedback arrives only after the study is complete, so it can force researchers to repeat a study, settle for a lower-ranked journal that publishes more limited research, or abandon publication altogether.

The new two-stage approach thus allows wiser use of research resources, including tax-funded grant money and survey respondents’ time.

Not only will the move help re-establish trust in the managerial and organisational sciences, whose findings may ultimately affect billions of workers, it also means that everyone has to accept that scientific inquiry, like nature, is complex and messy.

It will be important to see how many researchers and journals actually opt to publish null findings, and whether and how that affects the journals’ impact factors and rankings over time.

It will also be interesting to see whether the media reflects these changes and covers intriguing theory whilst acknowledging insufficient empirical support. And it will be crucial to see whether greater scientific transparency and neutrality bring about a shift in how managers adopt and interpret evidence.

For now, we have been offered an opportunity, and we shall embrace it and investigate the questions above – outcome unknown.

This article has been altered since publication to correct a headline that inaccurately reflected the piece. The error was made by an editor.