The election of the Rudd Government in 2007 killed the momentum that had been building in the last few years of the Howard government for research valuation to move beyond metrics-based methods (such as counting peer-reviewed publications and citations) to a broader assessment that looked at the research’s impact on the world outside academia.

The incoming Minister for Research, Labor Senator Kim Carr, aborted the Coalition’s impact-inclusive Research Quality Framework (RQF), saying - among other things - that it “relies on an ‘impact’ measure that is unverifiable and ill-defined”.

Times have changed, however, and the push is back on, championed once again by the Australian Technology Network of Universities (ATN) - comprised of Curtin University of Technology; the University of South Australia; RMIT University; University of Technology, Sydney; and Queensland University of Technology.

The ATN ran a pilot of measuring impact in 2005 that fed into the RQF, and it will run another pilot next year - but this time in conjunction with the prestigious Group of Eight and a handful of other universities. The collective’s Joint Implementation Working Group will meet over the summer to plan a 12-university pilot scheduled for the second half of next year, with a report going to the Government in November 2012.

The test-run will be informed by the 2005 work and by the RQF’s English offspring - an impact-measuring scheme now moving from pilot to policy. The UK’s program was overseen by David Sweeney, who addressed a joint ATN-Group of Eight symposium a fortnight ago.

Under the UK scheme (the Research Excellence Framework), academics were asked what influence and impact their research had had on society at large. A case study used was that of Dr Robert Macfarlane, a writer, critic, and broadcaster who teaches in Cambridge’s English Department.

Rather than focus purely on the number of peer-reviewed publications and other in-house metrics, Dr Macfarlane’s impact statement made a much broader case:

Macfarlane’s research has also contributed to a resurgence of ‘nature writing’ in Britain. He has written introductions to eight topographical books and has been working with HarperCollins to create a library of classics from this tradition, each one chosen and introduced by him. The Guardian essay series Common Ground has been read over 200,000 times online and has inspired the founding of a new publishing imprint, Little Toller Books, dedicated to re-issuing ‘lost classics’ of the nature writing tradition.

Dr Macfarlane’s impact report also shows the hefty international sales figures for his books (which include Mountains of the Mind), describes the reach he has achieved through BBC Radio and BBC Television, lists his literary awards and critical acclaim, and mentions the scores of public lectures he gives and hundreds of letters he receives each year from the public.

Here the national director of the ATN, Vicki Thomson, discusses the push for such a system in Australia:

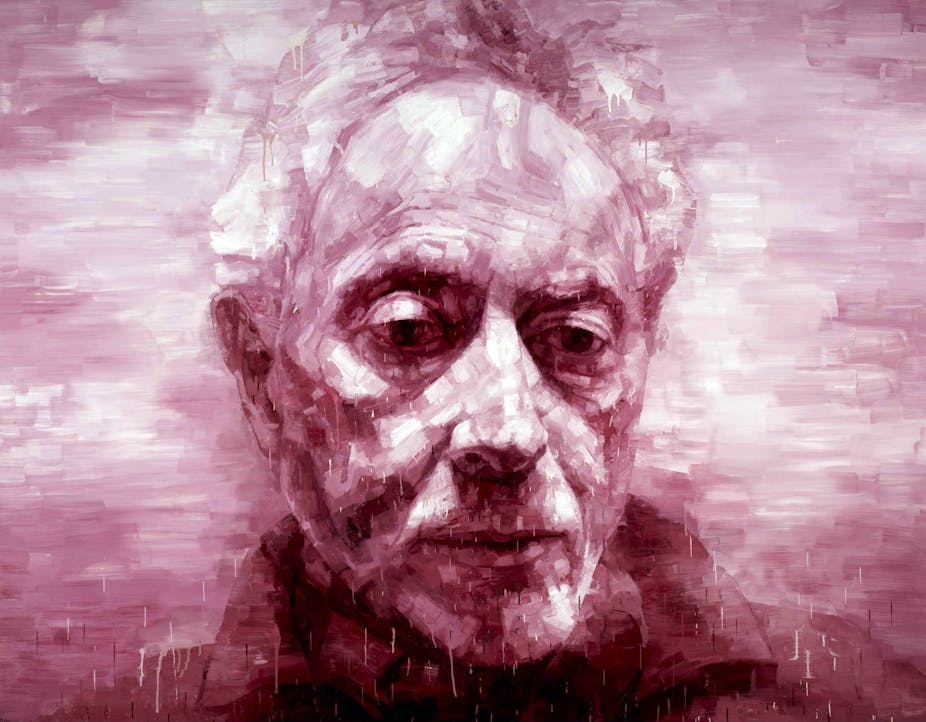

Vicki Thomson, National Director of the Australian Technology Network of Universities

Q. Was it disappointing when the RQF was scrapped?

Yes, very. To be fair, [Senator] Kim Carr was then shadow education minister, and he was never an advocate for the assessment of research impact. So we knew that if the government changed, then this would go with it. We think we did develop a fairly robust case for him. But he didn’t want to be part of that discussion.

The frustrating thing is we’d been to the UK at a time when they were saying, ‘No, we’re not interested in assessing research impact, but show us what you did anyway’, so we did, and guess what?

I was in the UK when they were doing their impact trials for the Research Excellence Framework and David Sweeney said ‘Look, I’m not quite sure why you want to meet with us because we’re basically looking at what you did and tweaking it for our circumstances.’

One of Kim Carr’s big arguments against assessing research impact was that it wasn’t internationally tested. Our argument against that was, ‘So, why can’t we be the first to market?’, and he said, ‘No, it can’t be,’ and he was quite keen on just [assessing] research quality and the usual metrics. So it was frustrating because we put a lot of work into it. But that’s the way the political cycle works and we’re pragmatists.

And then the UK did it, and guess what: now the Minister’s interested.

Q. Do you just start where you left off?

In 2011, now that we’ve got a government wanting to look at research impact, a lot of the work has been done through the trial we did back then, albeit the circumstances have changed and we’re not suggesting we just rip it out of the bottom drawer and say, ‘Here’s the answer.’

We accept that there’ll need to be modifications to what we did. But a lot of the discussions certainly around our five universities and with industry have already been taking place. It’s not brand new for us or for them.

We’re not certain what we’ll come out with. But what we’re going in with comes from saying, “Well, this is what we did with the RQF. This is what the UK’s done. Now what’s going to work for our context?” The difference from 2005 is that just the ATN did that trial, and now we’ve got a bunch of other universities with very different profiles, which is good. So that in itself will be challenging.

We’ve got a joint implementation working group with the 12 universities, and we’ve got a good advisory board, which includes Robin Batterham, and CSIRO, and representatives from the other universities, and Phil Clark who runs the EIF [Education Investment Fund] and is also an investment banker and was involved with the Business Council of Australia with the RQF - he’s a very strong advocate for research impact - and Laurie Hammond from Commercialisation Australia.

So that group we’re hoping to get together early in the new year where they’ll be the ones to say, “Ok, this is how we’re going to do this” – after we’ve done some work for them.

So that’s our next step in the process.

The challenge for us will be that the Minister is yet to be convinced that a case study approach is a robust method of assessment. His view is that they’re rortable and that they’re subjective.

One of the big discussions we had at the symposium and also with the advisory board was how important the storytelling of our research is. And there was a view that we have to get the narrative right, and it has to be evidence-based. There’s no question about that. But obviously it’s not going to be the same indicators used to measure research quality. So what’s really important is that narrative - that’s the challenging bit. How do you write a good story around your research, which demonstrates the impact it had?

There’s no doubt that what we’re about to do is tricky. We’re not saying this is going to be a walk in the park. But these are the issues that were raised and they’re not new issues. They were raised at our symposium, they were certainly addressed in the UK, and we addressed them as part of our RQF.

Let’s just pretend that this all works out well and the Government says they’re going to do it. [Then] we don’t want the ARC [Australian Research Council] to do the assessment of research impact, because we think the ARC is well placed to do what it does, but not well placed to look at assessments from an industry perspective.

We want industry to be heavily involved in the assessment of this research, and at the moment end-users of research are not on the assessment panels at the ARC, so you’re assessed by your peers.

In the second half of the year, universities will be done with their reporting for ERA [Excellence in Research for Australia].

We’ll run our trial around June-July-August, and that will give us a couple of months to assess it and put a report together at a time when hopefully budgets are being developed federally, and there’s an election, potentially, the next year. The shadow universities minister, Brett Mason, spoke at the symposium, and he’s very in favour of it, and we’ve also got a Government that is quite interested in it.

So if ever there was a time to do it, we think this could be it.

Q. Have you encountered much resistance?

No, we haven’t. David [Sweeney] did say that there was opposition [in the UK] from the purists, who don’t believe this is the way to go. That didn’t come out at our symposium. And we certainly gave plenty of opportunity for anyone who wasn’t in agreement with what we’re doing, with what we’re proposing to do, or at a policy level, to open up that discussion. Whilst there were words of caution about how we do this, and it’s got to be robust, and we’ve got to make sure the narrative is right, there was no dissent at all.

I’ve been really surprised in the lead up to the symposium and since it, that I’ve only had positive feedback from a wide range of people. I’ve had no negative feedback. Whether they’re just holding their fire.

The fact that we’ve got the Group of Eight universities involved as well helps - they wanted to be involved. We would have done this anyway at ATN, and then the Go8 approached us to be involved.

That has certainly changed the dynamic. What you had in 2005 was really the Go8 and the more research-intensive universities - and I don’t want to speak on their behalf - probably not as engaged in the debate back then, and they were definitely not as engaged as they are now.

Q. Professor Peter Shergold has spoken about disincentives for academics to work in public policy. Would impact assessments change that?

The system at the moment is a disincentive for the research community to look at research for public policy, because the funding drivers are all looking at research quality. And we’re not saying you shouldn’t assess research quality - of course you should - but we think there’s a big gap, and that gap is driving behaviour, as Peter Shergold’s been saying. Research is going to go where the money is, in the main.

If you can assess research and verify it in a meaningful way, you may then direct a portion of funding to research that has an emphasis on impact, which then drives behaviour. It’s counterintuitive for government policy to be running a huge innovation agenda - it’s basically innovate or die in a global context - but [meanwhile] the drivers in Australia aren’t leading researchers down that path. Sometimes it happens serendipitously, but without researchers being led down that pathway [by funding incentives].

Q. The humanities don’t get much of a look under the Government’s National Research Priorities. How would they fit with your proposed system?

There is no doubt that the challenging area in assessing research impact is in this particular discipline space. It’s very easy to assess a medical outcome or something else tangible. But it’s far more challenging and difficult to assess research, which maybe there’s not a lot of citations and publications on, but it’s had an impact nevertheless in a different way.

As part of the Minister’s announcement last week, they are looking at [revising] these national research priorities. Our view would be that the humanities disciplines are absolutely integral to what our national research priorities should be. They are assessable: they are more challenging, we acknowledge that. But we will particularly be working around how we do assess them.

Often an excuse as to why you can’t assess impact is because you can’t assess the impact of research which may come out of those disciplines. We believe you can assess it – don’t ask me how yet because I’m not sure. But we believe you can assess it, whether through a case-study approach or a hybrid approach of the UK system, in a meaningful way.

Once you start doing that and once it starts getting greater recognition in that policy context, one would assume then that that’s got to be a good thing for the discipline.

Q. Could impact-measuring displace pure science and other disciplines that work well with metric-based research measures?

No. That’s where most of the research dollars will and should go. But there is a gap that’s not funded, and behaviour is being shifted and skewed one way. You have to have a rich tapestry of research. We’re certainly not advocating one or the other, but at the moment it is just the other, it’s not one. And we’re saying that has to change.
