Inviting artificial intelligence into our bodies has appeal – but it also carries certain risks.

I have often wondered what it would be like to rid myself of a keyboard for data entry, and a computer screen for display. Some of my greatest moments of reflection are when I am in the car driving long distances, cooking in my kitchen, watching the kids play at the park, waiting for a doctor’s appointment or on a plane thousands of metres above sea level.

I have always been great at multitasking but at these times it is often not practical or convenient to be head down typing on a laptop, tablet or smartphone.

It would be much easier if I could just make a mental note to record an idea and have it recorded, there and then. And who wouldn’t want the ability to “jack into” all the world’s knowledge sources in an instant via a network?

Who wouldn’t want instant access to their life-pages filled with all those memorable occasions? Or even the ability to slow down the process of ageing, as long as living longer equated to living with mind and body fully intact?

Transhumanists would have us believe that these things are not only possible but inevitable.

In short: we Homo sapiens may dictate the next stage of our evolution through our use of technology.

Transhumanism

Shortly after starting my PhD, I came across a newly established organisation known as the World Transhumanist Association (WTA), now known as Humanity+ (H+), which was founded by Nick Bostrom and David Pearce.

Point 8 of the Transhumanist Declaration states:

“We favour allowing individuals wide personal choice over how they enable their lives. This includes use of techniques that may be developed to assist memory, concentration, and mental energy; life extension therapies; reproductive choice technologies; cryonics procedures; and many other possible human modification and enhancement technologies.”

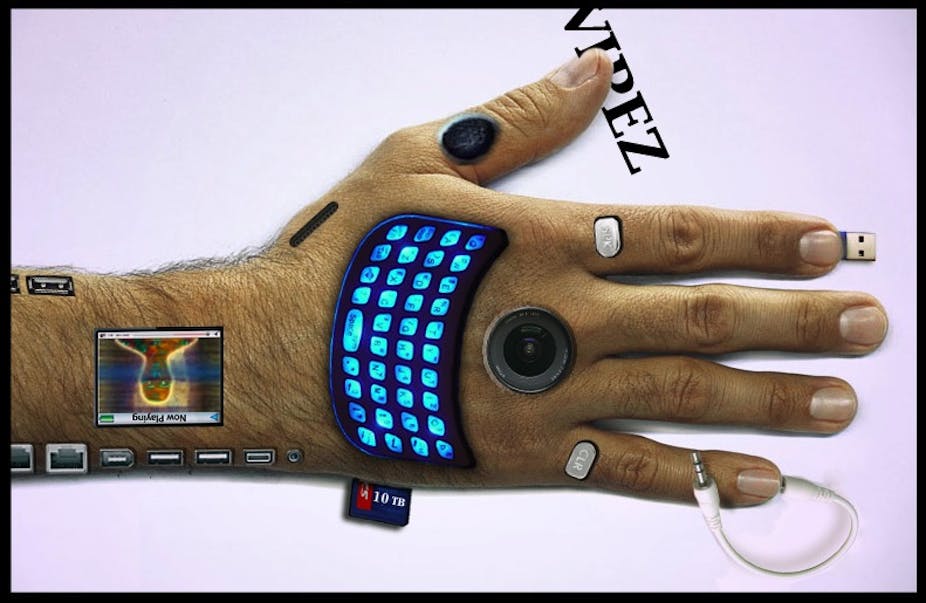

First let us consider briefly the traditional notion of a cyborg, part man/part machine, where technology can act to replace the need for human parts.

In this instance, some might willingly undergo surgical amputation for the sake of enhancement and longevity, with no pressing medical need for a prosthesis.

This might include the ability to get around the “wetware” of the brain, enabling our minds to be downloaded onto supercomputers.

Homo electricus

Perhaps those who love the look and feel of their human body more than machinery would much rather contemplate a world dominated by Homo electricus – a human who uses electromagnetic techniques to communicate ambiently with networks.

Such a person is an electrophorus, one who becomes a bearer of technology, inviting nano- and micro-scale devices into his or her body.

An electrophorus might also use brain-wave techniques such as electroencephalography (EEG), which measures the electrical activity of the brain, to perform actions simply by thinking about them.

This might be the best approach to retaining our inner thoughts for recollection, though there are myriad vital issues related to security, access control and privacy that must be addressed first.

Lifelogging

Twenty years ago, when I was still in high school, I would observe my headmaster, who was not all that fond of computers, walking around the playground carrying a tiny Dictaphone in his hands recording things for himself so that he could recollect them afterwards.

When I once asked him why he was engaging in this act, he said:

“Ah … there are so many things to remember! Unless I record them I forget them.”

He was surely onto something. His job required him to recall minute details.

Enter Steve Mann in the early 1990s, enrolled in a PhD at the MIT Media Lab and embarking on a project to record his whole life – himself, everyone else, and mostly everything in his field of view, 24/7.

At the time it would have sounded ludicrous to want to record your “whole life”, as Professor Mann puts it. With Mann’s wearcam devices (such as EyeTap), one can walk around recording, much like a mobile CCTV camera. The wearer becomes the photoborg.

It is an act Mann has called “sousveillance”, which equates to “watching from below”.

This is as opposed to watching from above, as when we are surveilled by CCTV mounted on a building wall, much as in George Orwell’s dystopian Nineteen Eighty-Four.

Since Mann’s endeavour, many have chosen this kind of black-box recorder lifestyle, and more recently even Google has joined in with its Project Glass.

My guess is that we are about to walk into an era of Person View systems which will show things on ground level through the eyes of our social network, beyond just Street View fly-throughs.

Other notable lifeloggers include Gordon Bell of Microsoft and Cathal Gurrin from Dublin City University.

MIT researcher Deb Roy lifelogged his son’s first year of life (with exceptions) by wiring up his home with video cameras.

When we talk about big data, you can’t get any bigger than this. Chunky multimedia, chunky files of all types from a multitude of sensors, and chunky data ripe for analysis (by police, the government, your boss, and potentially anyone).

But I have often wondered where these individuals have drawn the line – at which occasions they choose to “switch off” the camera, and why.

Yet this glogging still does not satisfy my wish to retain, and indeed download, all my thoughts for later retrieval.

Still photographs and continuous footage do help me to remember people I’ve met, things I’ve shared, knowledge I’ve gained, and feelings I’ve experienced. But lifelogging is limited and cannot record the thoughts I have had at every moment in my life.

However, there is an inherent problem with recording all my thoughts automatically with some kind of futuristic digital neural network: I would not want every thought I have ever had to be recorded.

Let’s face it, no-one is perfect and sometimes we think silly things that we would never want stored, shared with others or replayed back to us.

These are thoughts which are apt to be misconstrued or misinterpreted, perhaps even in an e-court. We also do and say silly things at times which may not be criminal but which we would not want family, friends, colleagues or even strangers to witness.

And there are those moments of heartbreak and horror alike that we would never wish to replay, for fear that we might be overcome with grief and become chronically depressed.

Is more than human better?

Evolving in ways that could better our lives can only be a good thing. But evolving to a stage where we humans become something other than human could be less desirable.

Dangers could include:

- electronic viruses

- virtual crimes (such as getting your e-life deleted, rewritten, rebooted or stolen)

- having your freedom and autonomy hijacked because you are at the mercy of so-called smart grids

Whatever the likelihood of these potentialities, they too, together with all of the positives, need to be interrogated.

Ultimately we need to be extremely careful that any artificial intelligence we invite into our bodies does not submerge the human consciousness and, in doing so, rule over it.

Remember, in Mary Shelley’s 1818 novel Frankenstein, it is Victor Frankenstein, the mad scientist, who emerges as the true monster, not the giant who wreaks havoc when he is rejected.

The author would like to thank her fellow collaborator Dr MG Michael, previously an honorary senior fellow at the University of Wollongong, NSW, Australia, for his insights and valuable input on the initial draft of this article.