Robotic vehicles have been used in dangerous environments for decades, from decommissioning the Fukushima nuclear power plant to inspecting underwater energy infrastructure in the North Sea. More recently, autonomous vehicles from boats to grocery delivery carts have made the gentle transition from research centres into the real world with very few hiccups.

Yet the promised arrival of self-driving cars has not progressed beyond the testing stage. And in one test drive of an Uber self-driving car in 2018, a pedestrian was killed by the vehicle. Although these accidents happen every day when humans are behind the wheel, the public holds driverless cars to far higher safety standards, interpreting one-off accidents as proof that these vehicles are too unsafe to unleash on public roads.

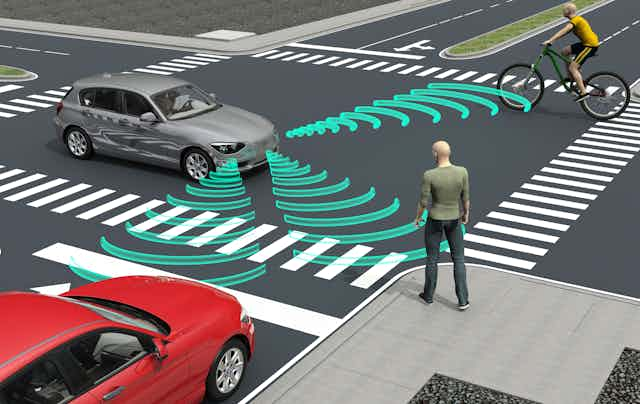

Programming the perfect self-driving car that will always make the safest decision is a huge and technical task. Unlike other autonomous vehicles, which are generally rolled out in tightly controlled environments, self-driving cars must function in the endlessly unpredictable road network, rapidly processing many complex variables to remain safe.

Inspired by the highway code, we’re working on a set of rules that will help self-driving cars make the safest decisions in every conceivable scenario. Verifying that these rules work is the final roadblock we must overcome to get trustworthy self-driving cars safely onto our roads.

Asimov’s first law

Science fiction author Isaac Asimov penned the “three laws of robotics” in 1942. The first and most important law reads: “A robot may not injure a human being or, through inaction, allow a human being to come to harm.” When self-driving cars injure humans, they clearly violate this first law.

We at the National Robotarium are leading research intended to guarantee that self-driving vehicles will always make decisions that abide by this law. Such a guarantee would provide the solution to the very serious safety concerns that are preventing self-driving cars from taking off worldwide.

AI software is actually quite good at learning about scenarios it has never faced. Using “neural networks” that take their inspiration from the layout of the human brain, such software can spot patterns in data, like the movements of cars and pedestrians, and then recall these patterns in novel scenarios.

But we still need to prove that any safety rules taught to self-driving cars will work in these new scenarios. To do this, we can turn to formal verification: the method that computer scientists use to prove that a rule works in all circumstances.

In mathematics, for example, rules can prove that x + y is equal to y + x without testing every possible value of x and y. Formal verification does something similar: it allows us to prove how AI software will react to different scenarios without our having to exhaustively test every scenario that could occur on public roads.
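As a small illustration of what such a proof looks like to a computer, here is a sketch in the Lean proof assistant. The theorem name `add_comm_example` is made up for this article, and `Nat.add_comm` is the existing library fact it relies on. It is meant only to show the flavour of a machine-checked proof, not a method used on self-driving cars.

```lean
-- Proved once for every pair of natural numbers x and y;
-- no individual values are ever plugged in and tested.
theorem add_comm_example (x y : Nat) : x + y = y + x :=
  Nat.add_comm x y
```

Once the proof checker accepts this statement, it holds for all natural numbers, which is the kind of blanket guarantee formal verification aims to give about software behaviour.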

One of the more notable recent successes in the field is the verification of an AI system that uses neural networks to avoid collisions between autonomous aircraft. Researchers have successfully formally verified that the system will always respond correctly, regardless of the horizontal and vertical manoeuvres of the aircraft involved.
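To give a feel for how a guarantee like this can be obtained, the sketch below uses interval bound propagation, one common technique for verifying small neural networks. It is not necessarily the method used for the aircraft system. The tiny network, its weights, the input ranges and the "brake" property are all hypothetical values invented for illustration.

```python
from typing import List, Tuple

Interval = Tuple[float, float]  # (lower bound, upper bound)


def affine_bounds(weights: List[List[float]], bias: List[float],
                  inputs: List[Interval]) -> List[Interval]:
    """Bound W*x + b for every x inside the given input intervals."""
    out = []
    for row, b in zip(weights, bias):
        lo = hi = b
        for w, (x_lo, x_hi) in zip(row, inputs):
            if w >= 0:
                lo += w * x_lo
                hi += w * x_hi
            else:
                # A negative weight swaps which end of the interval is extreme.
                lo += w * x_hi
                hi += w * x_lo
        out.append((lo, hi))
    return out


def relu_bounds(intervals: List[Interval]) -> List[Interval]:
    """ReLU is monotonic, so it can be applied to each end of an interval."""
    return [(max(0.0, lo), max(0.0, hi)) for lo, hi in intervals]


# Hypothetical toy network (weights invented for this example).
# Inputs: distance to obstacle (m), closing speed (m/s).
# Output: a braking score, where a positive value means "brake".
W1 = [[-1.0, 0.5], [0.0, 1.0]]
B1 = [10.0, 0.0]
W2 = [[1.0, 1.0]]
B2 = [-1.0]


def output_bounds(input_box: List[Interval]) -> Interval:
    """Bound the network output over the whole box of inputs at once."""
    hidden = relu_bounds(affine_bounds(W1, B1, input_box))
    return affine_bounds(W2, B2, hidden)[0]


# Property to check: whenever the obstacle is 0-5 m away and the closing
# speed is 2-20 m/s, the network's braking score is positive.
box = [(0.0, 5.0), (2.0, 20.0)]
lo, hi = output_bounds(box)
property_holds = lo > 0.0
print(f"output is within [{lo}, {hi}]; always brakes: {property_holds}")
```

The check covers every one of the infinitely many inputs inside the box in a single pass, rather than sampling them. The bounds are conservative: if the lower bound had come out negative, that would not prove the network is unsafe, only that this simple analysis could not confirm the property.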

Highway coding

Human drivers follow a highway code to keep all road users safe, which relies on the human brain to learn these rules and apply them sensibly in innumerable real-world scenarios. We can teach self-driving cars the highway code too. That requires us to unpick each rule in the code, teach vehicles’ neural networks to understand how to obey each rule, and then verify that they can be relied upon to safely obey these rules in all circumstances.

However, verifying that these rules will be safely followed becomes more complicated once we examine the consequences of the phrase “must never” in the highway code. To make a self-driving car as reactive as a human driver in any given scenario, we must program these policies in a way that accounts for nuance, weighted risk and the occasional scenario where different rules are in direct conflict, requiring the car to ignore one or more of them.

Such a task cannot be left solely to programmers – it’ll require input from lawyers, security experts, system engineers and policymakers. Within our newly formed AISEC project, a team of researchers is designing a tool to facilitate the kind of interdisciplinary collaboration needed to create ethical and legal standards for self-driving cars.

Teaching self-driving cars to be perfect will be a dynamic process: dependent upon how legal, cultural and technological experts define perfection over time. The AISEC tool is being built with this in mind, offering a “mission control panel” to monitor, supplement and adapt the most successful rules governing self-driving cars, which will then be made available to the industry.

We’re hoping to deliver the first experimental prototype of the AISEC tool by 2024. But we still need to create adaptive verification methods to address remaining safety and security concerns, and these will likely take years to build and embed into self-driving cars.

Accidents involving self-driving cars always create headlines. A self-driving car that recognises a pedestrian and stops before hitting them 99% of the time is a cause for celebration in research labs, but a killing machine in the real world. By creating robust, verifiable safety rules for self-driving cars, we’re attempting to make that 1% of accidents a thing of the past.