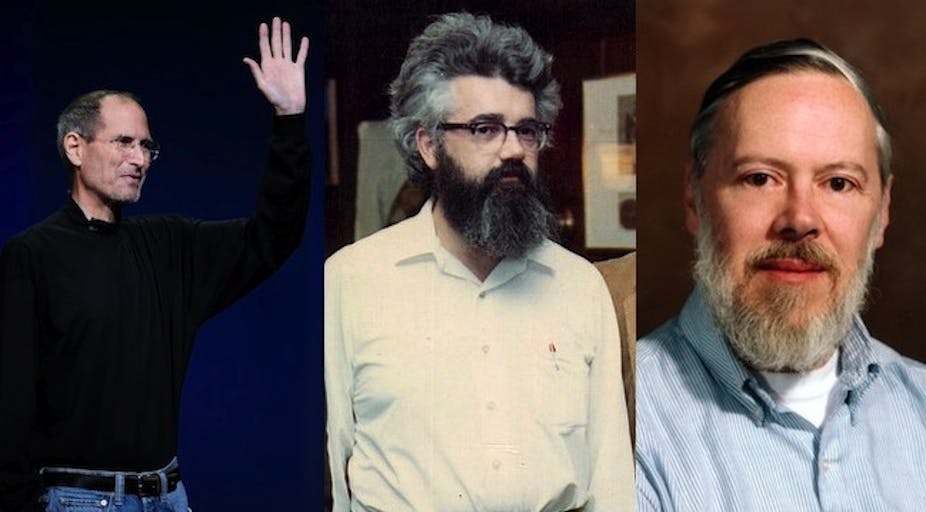

Last month saw the passing of three pioneers of the information age, an age we now more or less take for granted. These luminaries were [John McCarthy](http://en.wikipedia.org/wiki/John_McCarthy_(computer_scientist)), Dennis Ritchie and, of course, Steve Jobs.

Of the three, only Jobs’s death registered as a zeitgeist moment on a par with the deaths of JFK or Princess Diana.

Gossip-laden reminiscences are still percolating through the press in the wake of the Apple co-founder’s death: what did Jobs think of Bill Gates, and vice versa?

That in itself is a measure of the spirit of our times, an era when the “geek chic” of gadget culture has become a badge of fashion rather than an object of derision.

Why has the public mourning for Jobs been so evident and sustained in comparison to the quiet exits of McCarthy, who died last week at the age of 84, and Ritchie, who was 70 when he died last month?

I teach elements of computing history in a variety of IT courses at tertiary level, and all three are heroic figures in the annals of technology.

John McCarthy: pioneer of Artificial Intelligence

Computer scientist John McCarthy coined the term “Artificial Intelligence” in the mid-1950s and, through his work, speculated that machines would one day mimic or even surpass feats of human intelligence.

A decade into the 21st century, McCarthy’s visions of intelligent computers remain in the realm of science fiction, and the label A.I. was soon consigned to the curio bin, replaced by more conservative terms such as “intelligent agents”.

If today’s computers could behave like those in the movies, such as HAL 9000 in 2001: A Space Odyssey, McCarthy would be lauded as an intellectual hero in the Einstein mould.

(Amazing fact of the day: as early as 1959, John McCarthy proposed the concept of computer time-sharing, which, with a few tweaks here and there, has been re-imagined in our own day as the somewhat over-hyped “cloud computing”.)

Dennis Ritchie: father of C

Dennis Ritchie was supposed to be solving the problems of the US telephone network during his long tenure as a research scientist at the late, lamented Bell Labs, but instead he created the C programming language, a language arguably written for a future that has since become our present.

Together with his colleague Ken Thompson, Ritchie created the UNIX operating system, which by 1973 had been rewritten in his beloved C, and the rest is history.

A computer without an adequate operating system is proverbially about as useful as a rubber razor blade, and UNIX proved to be the seed of the open source movement, which flowered in the guise of the UNIX-like Linux.

Both C and UNIX are 1970s icons that still resonate in much of the technology we use today. For these achievements Dennis Ritchie received the National Medal of Technology from Bill Clinton, but I suspect his real satisfaction lay in working in a corporate environment that let him tinker creatively (and have fun) with ideas that may at times have run counter to its core business.

Google’s success in the 21st century is due partly to the fact that it, too, allows its innovators to work like Renaissance thinkers, in the spirit of Ritchie and his peers.

Steve Jobs: da Vinci of our times

Steve Jobs, too, was a modern, entrepreneurial version of Leonardo da Vinci. He was a latent polymath with idiosyncratic tastes.

Apple’s rise as the world’s dominant “cool” brand of information technology is due in part to its reawakening of the bygone notion that beauty coupled with engineering design can create a richer user experience, one driven by emotion as well as necessity.

Jobs taught us it was hip to love technology. But McCarthy and Ritchie gave us, in their own ways, technology we could really love.