Whenever an unfavourable political opinion poll comes out, you can count on one thing: at least one politician saying they never pay attention to polls. And so it goes for university leaders when the results are in from world university rankings.

The Times Higher Education (THE) World University Rankings 2013-14, released today, were not universally good news for Australian universities. While some improved their rank, the overall result was less encouraging.

The University of Melbourne lost six places, going from 28 to 34, while the ANU went from 37 to 48 and the University of Adelaide dropped out of the top 200 altogether.

The THE’s press release was blunt in its assessment, saying:

After a strong 2012-13, Australia has fallen back to earth with a bump.

And now for many universities, the ritual dance around a rankings release will proceed with vigour. Universities will variously welcome or dismiss the result, depending on the good or bad news.

But do university rankings really mean much for the institutions involved, beyond something to add to glossy advertisements? And do ever-newer rankings and university measures just mean more music for universities to dance to?

Many different ways to be ranked

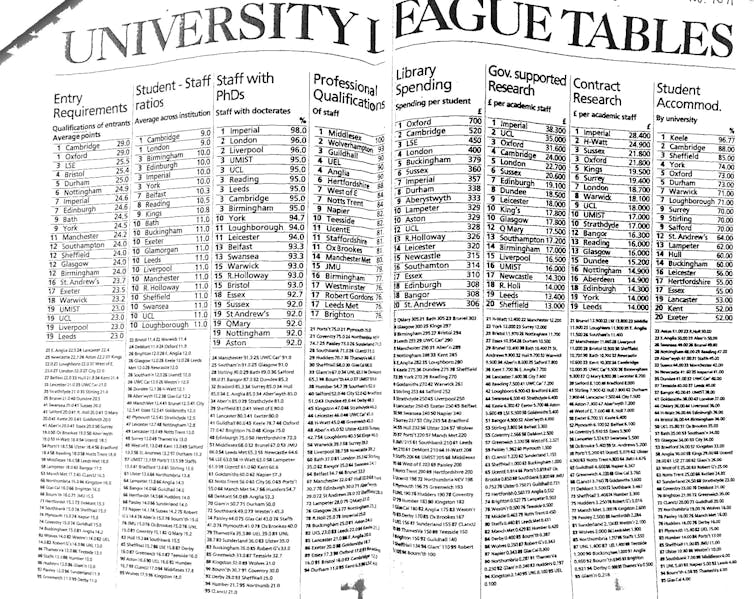

There are clearly many ways to measure what universities do – from research spending to student accommodation, as this UK University League Table from 30 years ago shows.

Increasingly, there are more specific measures that try to capture some novel aspect of university offerings and the list of large-scale rankings published around the world seems to be getting longer.

The THE’s Alma Mater Index: Global Executives 2013, for example, measures degrees awarded to CEOs, alongside the number of CEO alumni and the revenue of their companies. This raises the question of whether such a measure tells us more about perceived prestige than anything else.

Perhaps unsurprisingly, those US universities that rank well in other lists are also heavily represented on the Alma Mater Index. But so are other institutions that do not rank as highly, though they are often ones that are prestigious in a particular nation.

A new European experiment in university rankings is U-Multirank, which measures and ranks different dimensions of universities, such as teaching and learning, research, knowledge transfer, international orientation and regional engagement. It shows differences between institutional focus and allows similarly profiled universities to be better compared.

Here in Australia, a project by the LH Martin Institute and the Australian Council for Educational Research (ACER) looks at the diversity of Australia’s institutions today, using evidence-based profiles and the method employed by U-Multirank.

Also in Australia, there is the My University website and the Good Universities Guide. With the variety of rankings and measures on offer, it appears that there is abundant data to compare universities.

The international student market

Rankings, such as the THE world rankings, attract increasing press coverage. There are legitimate fears that a change in ranking affects international student preferences, for both individual institutions and the Australian higher education sector.

Predicting the exact impact that rankings have on the international student market is fraught. There are several factors that appear to affect the attractiveness of Australian higher education – such as the exchange rate, changing visa arrangements and the international press reporting of Australia as a study destination.

Fees from international students effectively cross-subsidise much research and, at times, domestic teaching in most universities. Estimates find that on average each international student contributes around A$5100 per year.

Universities can ill afford to lose this critical revenue.

By recent count, Australia has about 7% of the international student market, a very good proportion compared to our share of world population. But higher education is becoming more competitive globally and technology threatens existing campus-based teaching.

Countries with a strong history of higher education, such as the UK, are seeking a larger share of the world market. Technology, of which MOOCs – or Massive Open Online Courses – are the flavour of the month, could dramatically change the attractiveness of campus-based teaching.

So if a change in rankings makes Australia less attractive to international students, those in universities might legitimately fear some unwelcome consequences.

But we should be wary of arguing too strongly that a change in rankings alone will dramatically affect international (or domestic) student preferences. Many factors influence why students choose a particular university or country over another.

And universities should probably be extra careful to avoid any “magical thinking”: how universities do in various rankings (well or badly) may not affect the world they exist in at all.