If there is one iron law of economics it is this: people respond to incentives. Offer an “all you can eat” buffet and people eat a lot. Double the demerit points for speeding on a holiday weekend and fewer people speed. And if you make school funding contingent on achieving threshold numeracy and literacy test scores then those thresholds will likely be met.

The trouble is that it might not be because of genuine improvements in skills.

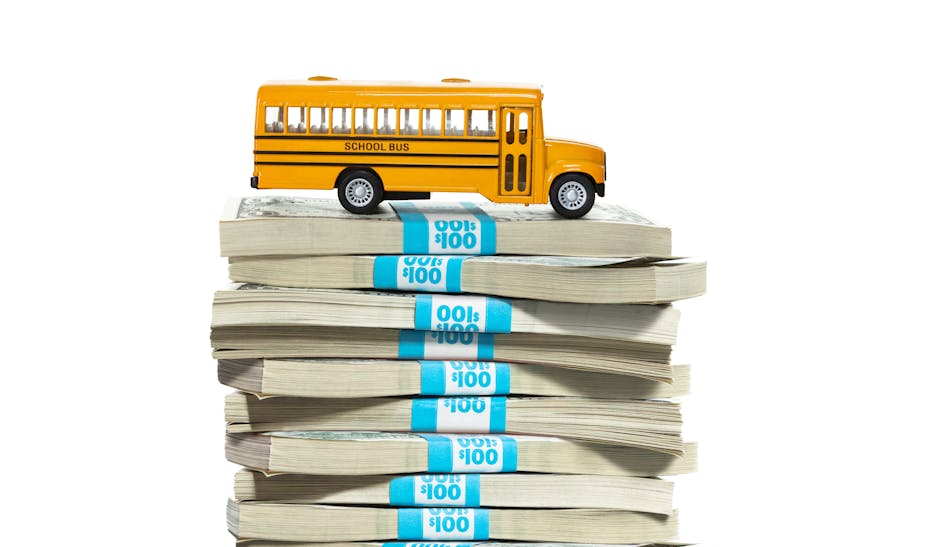

The Business Council of Australia’s recent report on innovation suggested tying federal funding of education to the achievement of threshold NAPLAN literacy and numeracy standards. It argued that a well-educated workforce is crucial for innovation and economic growth.

I couldn’t agree more about the need for an educated workforce. And I’m not one of these metricphobes who thinks that measuring performance is inherently evil, or that all league tables are bad. Far from it. But I do worry a lot about how incentives for teachers and students in schools work. There’s a swath of evidence that incentives in education lead to unintended outcomes — or even outright fraud.

Teaching to the test

Put yourself in the position of the person who is the boss of all school principals in a state and who is faced with the Business Council’s scheme. Some portion of those principals run schools whose kids are currently below the literacy and numeracy thresholds. What do you do? Faced with the possibility of large losses of funding you probably put pressure on those principals to get better results.

What levers do they have available? They can’t change the selection of kids in their schools — and if they did it would just shift the problem elsewhere.

They can’t change the kids’ parents or their classmates and the important influences they have. They can’t easily change the teachers in their schools.

What they can do is have teachers focus on testing outcomes rather than holistic ones. They can “teach to the test”.

Teaching to the test badly misallocates the most precious educational resource: teacher time. It focuses students on how to mechanically perform a small number of tasks.

As one illustration of how details matter, consider giving students cash incentives to do more homework. Setting aside the cost, this seems like a no-brainer, but in fact it can lead to negative outcomes.

Research I conducted with Roland Fryer of the Harvard University economics department was designed to document this difficulty. We provided incentives to 5th-grade schoolchildren in Houston, Texas, to do mathematics homework problems. They earned $2 for each mathematics objective they mastered, with performance assessed through take-home worksheets and in-class computer software.

Because we randomised which schools got the software and incentives, and which schools just got the software, we could determine the causal effect of the incentives, just as in a randomised, controlled pharmaceutical trial with a treatment group and a control group.

The answer? Kids with incentives did way more maths homework. And they did significantly better on end-of-year standardised tests in mathematics.

Great news? Not so fast. These gains in mathematics were, alas, almost exactly offset by a decline in performance on standardised reading tests.

Interestingly, measures of intrinsic motivation did not drop: financial incentives did not destroy the “joy of learning”. Instead, the cause was what economists call “effort substitution”: incentives in mathematics shifted effort away from reading and towards mathematics.
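To make effort substitution concrete, here is a toy sketch in Python. It is not the model or the data from the Houston study. It simply assumes that a student has a fixed number of study hours each week, that an incentive changes only how those hours are split, and that test scores rise linearly with hours. The function names and every number in it (10 hours, the 50% and 75% maths shares, 2 points per hour) are hypothetical and chosen purely for illustration.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass
class Allocation:
    """Hours per week a student spends on each subject (hypothetical)."""
    maths_hours: float
    reading_hours: float


def allocate_effort(total_hours: float, maths_share: float) -> Allocation:
    """Split a fixed study budget between maths and reading."""
    if not 0.0 <= maths_share <= 1.0:
        raise ValueError("maths_share must be between 0 and 1")
    maths = total_hours * maths_share
    return Allocation(maths_hours=maths, reading_hours=total_hours - maths)


def score_change(before: Allocation, after: Allocation,
                 points_per_hour: float) -> Tuple[float, float]:
    """Assumed linear link between study hours and test-score points."""
    maths_delta = (after.maths_hours - before.maths_hours) * points_per_hour
    reading_delta = (after.reading_hours - before.reading_hours) * points_per_hour
    return maths_delta, reading_delta


# Illustrative numbers only: the total budget stays at 10 hours, but the
# maths share rises from 50% to 75% once maths objectives pay cash.
baseline = allocate_effort(total_hours=10.0, maths_share=0.5)
incentivised = allocate_effort(total_hours=10.0, maths_share=0.75)

maths_delta, reading_delta = score_change(baseline, incentivised,
                                          points_per_hour=2.0)
print(f"maths: {maths_delta:+.1f} points, reading: {reading_delta:+.1f} points")
```

In this sketch the incentive does not create any extra study time, so the gain in maths is paid for entirely by reading. Real classrooms are messier, but the mechanism is the same: reward one measured task and effort flows towards it from everything else.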

And then there’s fraud

High-stakes testing for whole school districts or even whole states of Australia raises the disturbing possibility of outright test fraud.

Just last week the trial of 12 former educators accused of fraudulently inflating test scores began in Atlanta, Georgia. The group includes teachers, testing coordinators, principals and administrators.

At least one Atlanta school held pizza parties where educators erased incorrect answers on tests and filled in the correct ones to boost kids’ scores. And not just a few. The prosecution claims that across the Atlanta public school system, in 2009 alone, 256,769,000 incorrect answers were “corrected”. Yep: one quarter of a billion!

But surely that wouldn’t happen here in Australia? Perhaps not, although I’ll bet that’s what folks in the great state of Georgia thought until recently.

Steve Levitt (of Freakonomics fame) has also documented widespread teacher cheating in Chicago public schools: from changing answers, to getting tests ahead of time, to teaching answers to precise questions. Atlanta is not a one-off.

Incentives are incredibly powerful — and in many settings carefully crafted explicit incentives can do a lot of good.

But education is a very complex environment, one in which explicit incentives have the potential to do more harm than good. If you pay for better NAPLAN scores then better NAPLAN scores you will get. But they won’t necessarily mean genuinely better literacy and numeracy. And you probably won’t like the side effects.