I’m sitting on a train when a group of football fans streams on. Fresh from the game – their team has clearly won – they occupy the empty seats around me. One picks up a discarded newspaper and chuckles derisively as she reads about the latest “alternative facts” peddled by Donald Trump.

The others soon chip in with their thoughts on the US president’s fondness for conspiracy theories. The chatter quickly turns to other conspiracies and I enjoy eavesdropping while the group brutally mock flat Earthers, chemtrails memes and Gwyneth Paltrow’s latest idea.

Then there’s a lull in the conversation, and someone takes it as an opportunity to pipe up with: “That stuff might be nonsense, but don’t try and tell me you can trust everything the mainstream feeds us! Take the moon landings, they were obviously faked and not even very well. I read this blog the other day that pointed out there aren’t even stars in any of the pictures!”

To my amazement the group joins in with other “evidence” supporting the moon landing hoax: inconsistent shadows in photographs, a fluttering flag when there’s no atmosphere on the moon, how Neil Armstrong was filmed walking on to the surface when no-one was there to hold the camera.

A minute ago they seemed like rational people capable of assessing evidence and coming to a logical conclusion. But now things are taking a turn down crackpot alley. So I take a deep breath and decide to chip in.

“Actually all that can be explained quite easily … ”

They turn to me, aghast that a stranger would dare to butt into their conversation. I continue undeterred, hitting them with a barrage of facts and rational explanations.

“The flag didn’t flutter in the wind, it just moved as Buzz Aldrin planted it! Photos were taken during lunar daytime – and obviously you can’t see the stars during the day. The weird shadows are because of the very wide-angle lenses they used which distort the photos. And nobody took the footage of Neil descending the ladder. There was a camera mounted on the outside of the lunar module which filmed him making his giant leap. If that isn’t enough then the final clinching proof comes from the Lunar Reconnaissance Orbiter’s photos of the landing sites where you can clearly see the tracks that the astronauts made as they wandered around the surface.”

“Nailed it!” I think to myself.

But it appears my listeners are far from convinced. They turn on me, producing more and more ridiculous claims. Stanley Kubrick filmed the lot, key personnel have died in mysterious ways, and so on …

The train pulls up at a station. It isn’t my stop, but I take the opportunity to make an exit anyway. As I sheepishly mind the gap I wonder why my facts failed so badly to change their minds.

The simple answer is that facts and rational arguments really aren’t very good at altering people’s beliefs. That’s because our rational brains are fitted with not-so-evolved evolutionary hard wiring. One of the reasons conspiracy theories spring up with such regularity is our desire to impose structure on the world and our incredible ability to recognise patterns. Indeed, a recent study showed a correlation between an individual’s need for structure and their tendency to believe in a conspiracy theory.

Take this sequence for example:

0 0 1 1 0 0 1 0 0 1 0 0 1 1

Can you see a pattern? Quite possibly – and you aren’t alone. A quick Twitter poll (replicating a much more rigorous study) suggested that 56% of people agree with you – even though the sequence was generated by me flipping a coin.

It seems our need for structure and our pattern recognition skill can be rather overactive, causing a tendency to spot patterns – like constellations, clouds that look like dogs and vaccines causing autism – where in fact there are none.

The ability to see patterns was probably a useful survival trait for our ancestors – better to mistakenly spot signs of a predator than to overlook a real big hungry cat. But plonk the same tendency in our information-rich world and we see nonexistent links between cause and effect – conspiracy theories – all over the place.

Peer pressure

Another reason we are so keen to believe in conspiracy theories is that we are social animals and our status in that society is much more important (from an evolutionary standpoint) than being right. Consequently we constantly compare our actions and beliefs to those of our peers, and then alter them to fit in. This means that if our social group believes something, we are more likely to follow the herd.

This effect of social influence on behaviour was nicely demonstrated back in 1969 by the street corner experiment, conducted by the US social psychologist Stanley Milgram (better known for his work on obedience to authority figures) and colleagues. The experiment was simple (and fun) enough for you to replicate. Just pick a busy street corner and stare at the sky for 60 seconds.

Most likely very few folks will stop and check what you are looking at – in this situation Milgram found that about 4% of the passersby joined in. Now get some friends to join you with your lofty observations. As the group grows, more and more strangers will stop and stare aloft. By the time the group has grown to 15 sky gazers, about 40% of the passersby will have stopped and craned their necks along with you. You have almost certainly seen the same effect in action at markets where you find yourself drawn to the stand with the crowd around it.

The principle applies just as powerfully to ideas. If more people believe a piece of information, then we are more likely to accept it as true. And so if, via our social group, we are overly exposed to a particular idea then it becomes embedded in our world view. In short, social proof is a much more effective persuasion technique than purely evidence-based proof, which is of course why this sort of proof is so popular in advertising (“80% of mums agree”).

Social proof is just one of a host of logical fallacies that also cause us to overlook evidence. A related issue is the ever-present confirmation bias, that tendency for folks to seek out and believe the data that supports their views while discounting the stuff that doesn’t. We all suffer from this. Just think back to the last time you heard a debate on the radio or television. How convincing did you find the argument that ran counter to your view compared to the one that agreed with it?

The chances are that, whatever the rationality of either side, you largely dismissed the opposition arguments while applauding those who agreed with you. Confirmation bias also manifests as a tendency to select information from sources that already agree with our views (which probably come from the social group that we relate to). Hence your political beliefs probably dictate your preferred news outlets.

Of course there is a belief system that recognises logical fallacies such as confirmation bias and tries to iron them out. Science, through repetition of observations, turns anecdote into data, reduces confirmation bias and accepts that theories can be updated in the face of evidence. That means that it is open to correcting its core texts. Nevertheless, confirmation bias plagues us all. Star physicist Richard Feynman famously described an example of it that cropped up in one of the most rigorous areas of science, particle physics.

“Millikan measured the charge on an electron by an experiment with falling oil drops and got an answer which we now know not to be quite right. It’s a little bit off, because he had the incorrect value for the viscosity of air. It’s interesting to look at the history of measurements of the charge of the electron, after Millikan. If you plot them as a function of time, you find that one is a little bigger than Millikan’s, and the next one’s a little bit bigger than that, and the next one’s a little bit bigger than that, until finally they settle down to a number which is higher.”

“Why didn’t they discover that the new number was higher right away? It’s a thing that scientists are ashamed of – this history – because it’s apparent that people did things like this: When they got a number that was too high above Millikan’s, they thought something must be wrong and they would look for and find a reason why something might be wrong. When they got a number closer to Millikan’s value they didn’t look so hard.”

Myth-busting mishaps

You might be tempted to take a lead from popular media by tackling misconceptions and conspiracy theories via the myth-busting approach. Naming the myth alongside the reality seems like a good way to compare the facts and falsehoods side by side so that the truth will emerge. But once again this turns out to be a bad approach: it appears to elicit something that has come to be known as the backfire effect, whereby the myth ends up becoming more memorable than the fact.

One of the most striking examples of this was seen in a study evaluating a “Myths and Facts” flyer about flu vaccines. Immediately after reading the flyer, participants accurately remembered the facts as facts and the myths as myths. But just 30 minutes later this had been completely turned on its head, with the myths being much more likely to be remembered as “facts”.

The thinking is that merely mentioning the myths actually helps to reinforce them. And then as time passes you forget the context in which you heard the myth – in this case during a debunking – and are left with just the memory of the myth itself.

To make matters worse, presenting corrective information to a group with firmly held beliefs can actually strengthen their view, despite the new information undermining it. New evidence creates inconsistencies in our beliefs and an associated emotional discomfort. But instead of modifying our belief we tend to invoke self-justification and even stronger dislike of opposing theories, which can make us more entrenched in our views. This has become known as the “boomerang effect” – and it is a huge problem when trying to nudge people towards better behaviours.

For example, studies have shown that public information messages aimed at reducing smoking, alcohol and drug consumption all had the reverse effect.

Make friends

So if you can’t rely on the facts how do you get people to bin their conspiracy theories or other irrational ideas?

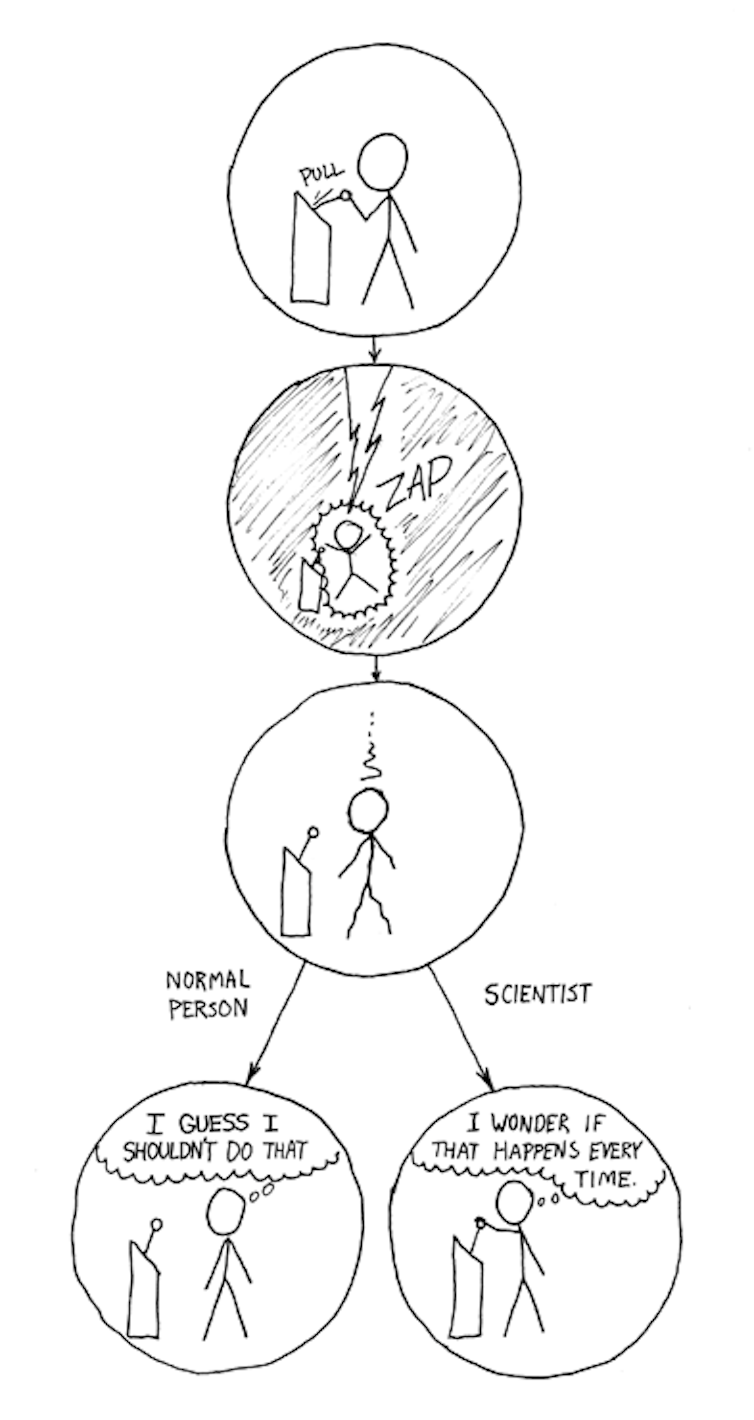

Scientific literacy will probably help in the long run. By this I don’t mean a familiarity with scientific facts, figures and techniques. Instead what is needed is literacy in the scientific method, such as analytical thinking. And indeed studies show that dismissing conspiracy theories is associated with more analytic thinking. Most people will never do science, but we do come across it and use it on a daily basis and so citizens need the skills to critically assess scientific claims.

Of course, altering a nation’s curriculum isn’t going to help with my argument on the train. For a more immediate approach, it’s important to realise that being part of a tribe helps enormously. Before starting to preach the message, find some common ground.

Meanwhile, to avoid the backfire effect, ignore the myths. Don’t even mention or acknowledge them. Just make the key points: vaccines are safe and reduce the chances of getting flu by between 50% and 60%, full stop. Don’t mention the misconceptions, as they tend to be better remembered.

Also, don’t get your opponents’ dander up by challenging their worldview. Instead offer explanations that chime with their preexisting beliefs. For example, conservative climate-change deniers are much more likely to shift their views if they are also presented with the pro-environment business opportunities.

One more suggestion. Use stories to make your point. People engage with narratives much more strongly than with argumentative or descriptive dialogues. Stories link cause and effect, making the conclusions that you want to present seem almost inevitable.

All of this is not to say that the facts and a scientific consensus aren’t important. They are critically so. But an awareness of the flaws in our thinking allows you to present your point in a far more convincing fashion.

It is vital that we challenge dogma, but instead of linking unconnected dots and coming up with a conspiracy theory we need to demand the evidence from decision makers. Ask for the data that might support a belief and hunt for the information that tests it. Part of that process means recognising our own biased instincts, limitations and logical fallacies.

So how might my conversation on the train have gone if I’d heeded my own advice? Let’s go back to that moment when I observed that things were taking a turn down crackpot alley. This time, I take a deep breath and chip in with:

“Hey, great result at the game. Pity I couldn’t get a ticket.”

Soon we’re deep in conversation as we discuss the team’s chances this season. After a few minutes’ chatter I turn to the lunar landing conspiracy theorist: “Hey, I was just thinking about that thing you said about the moon landings. Wasn’t the sun visible in some of the photos?”

He nods.

“Which means it was daytime on the moon, so, just like here on Earth, would you expect to see any stars?”

“Huh, I guess so, hadn’t thought of that. Maybe that blog didn’t have it all right.”