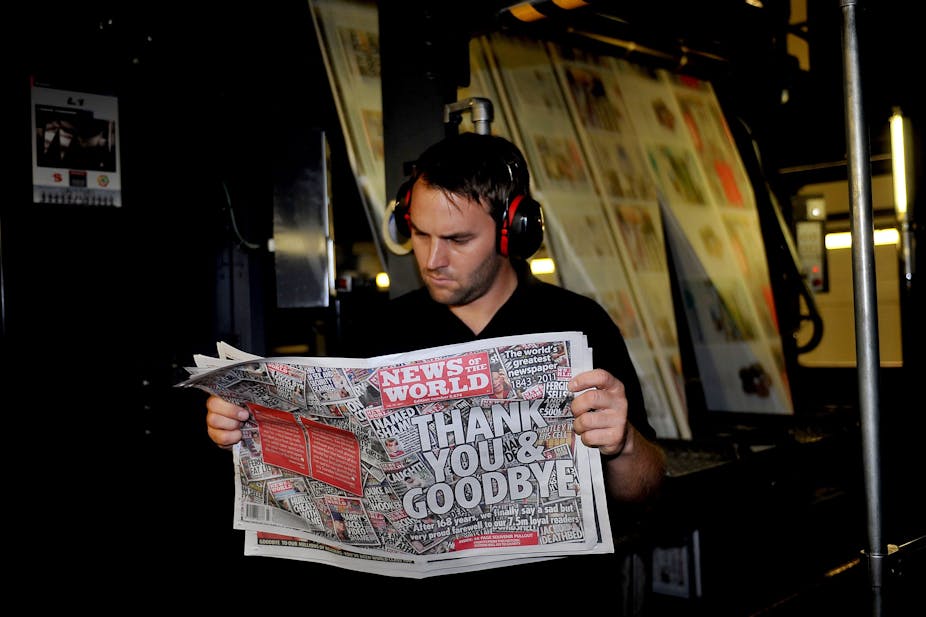

So The Guardian has now retracted its earlier reports that News of the World journalists had deleted Milly Dowler’s voicemails. Those journalists hacked the dead girl’s phone but they may not have deleted any messages.

Any genuine attempt to correct an earlier mistake is to be commended, but the question arises: will this correction change how people perceive the actions of those journalists?

The answer that cognitive science gives is: probably not.

Many Americans still don’t believe that Obama was born in the US.

Many Australians still reject the fact that no children were ever thrown overboard by asylum seekers.

GPs in the UK and elsewhere still struggle to convince parents the MMR vaccination is safe.

People seem inherently reluctant to update their memories and un-believe something they once believed, even if it turns out to be a myth. But why?

First of all, there are effects of people’s pre-existing beliefs and attitudes, which bias information processing. People are very good at cherry-picking information that confirms their worldviews, while dismissing information that doesn’t. So to some degree people will continue to rely on misinformation if it supports their worldview, and hence their sense of identity.

But apart from these biases, what about lingering misinformation effects in people who don’t hold strong beliefs?

Psychological research has offered two explanations for why such misinformation persists. These explanations are not mutually exclusive but operate on different levels.

One goes like this: when people learn about an event, they build a mental representation of that event, containing the gist of what happened — for example, A caused B. People use this mental model to later recall the event.

If a key piece of information is later retracted, people are left with a gap in their model. This can be confusing: “If A never happened, how could B happen?” Not knowing what is going on, and having conflicting ideas about something, feels uncomfortable.

When reasoning about the event, people will therefore often use the easily available misinformation (A), even when they accurately remember its retraction.

In other words, people often prefer an incorrect model of the world over an incomplete model of the world. So as long as the retraction is not accompanied by a plausible alternative explanation that can fill this gap, the retraction will be rather ineffective.

For example, the Guardian’s retraction did not give a convincing explanation as to how Milly Dowler’s voicemails were deleted, merely suggesting they may have been deleted automatically.

Without a convincing alternative, people will continue to refer to the initial explanation, despite the retraction. (Other sources make the automatic deletion scenario more plausible by citing the phone company’s 72-hour deletion policy, which will make the retraction more effective. However, those sources now also seem to forget that, deletions aside, the journalists at News of the World did hack a missing schoolgirl’s phone, thus trying to turn a partial correction into an unfounded complete exoneration.)

The second explanation for why misinformation lingers assumes there are two different types of memory processes: automatic and controlled.

Automatic memory just supplies information that is activated by whatever we are doing at a given time. This information is not always valid and hence we need a checking mechanism.

Controlled memory is therefore used to ensure that what is supplied by automatic memory is actually accurate and relevant to the present context (and not, for example, something we saw in a movie, something we experienced in a different context — or a piece of outdated misinformation).

Controlled memory processes require cognitive effort. So if we are distracted or unmotivated, they can go wrong. Controlled memory is also prone to interference, so things happening after the event will over time make it more difficult to remember exactly what really happened.

These cognitive processes lead to persistent effects of misinformation. Misinformation effects can be counteracted (see here for some practical tips) but they are difficult to avoid.

In addition to these purely cognitive factors, emotional factors influencing the communication of information play a big role in maintaining incorrect information. While plausibility and believability play a certain role in whether or not information is passed on, people simply love to pass on information that will generate an emotional reaction in the recipient, in particular if it will evoke fear or disgust.

A tiger roaming the streets of London, asylum seekers throwing their children overboard, or journalists deleting a teenage crime victim’s voicemail—these are the kinds of myths that go viral because people love spreading them.

Unfortunately, it is not only high-school students tweeting their friends, but also the media that disseminates misinformation. This can be due to genuine error, substandard fact-checking, a hidden agenda, or simply an attempt to increase sales.

Fact is, once it’s out there, the damage is done.