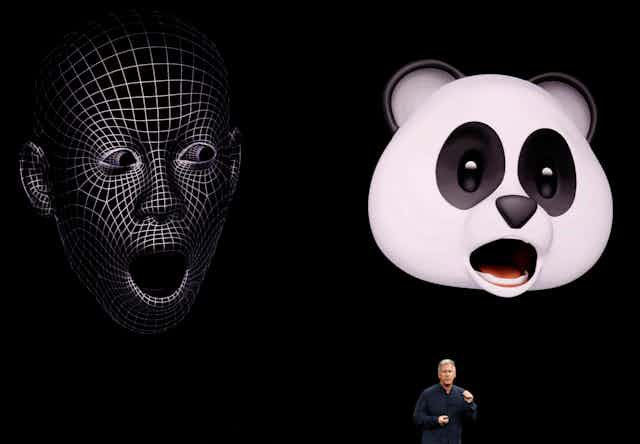

The iPhone X is here, which means Apple’s push into augmented reality (AR) begins in earnest.

AR offers a future where any graphic you see on a computer screen could be extracted and superimposed onto a real-world environment. Want to see how a new piece of furniture would look in your living room? Just drop a digital model of it into the camera view on your smartphone screen. Want to examine an anatomical model from all angles? Digitally place it on your desk.

A whole range of tech players think this technology (variously called augmented reality, mixed reality or immersive environments) is set to create a truly blended digital-physical environment.


That’s what Apple is betting on with ARKit, a platform that lets developers create AR experiences. It will roll out with iOS 11, the latest version of its operating system.

Almost all the big consumer tech brands have invested in AR, but for now Apple seems to be offering the most seamless and widely available software experience. Still, with Google and Microsoft also making plays in this space, an AR war is brewing.

A look back at commercial mixed reality

The push towards consumer AR has been growing for a while.

One of the first notable examples was Google Glass, which was unveiled back in 2012.

Google Glass was a pair of spectacles that presented a small extra screen in the top right of the wearer's vision. This screen could supply additional information, and when paired with a phone, Glass provided data about the surrounding environment. For example, it could give prompts when the user arrived at a certain location.

After a series of embarrassments, Google re-targeted Glass to focus on enterprise. But the company still has several other projects that fit into the AR space. They include a developer platform called ARCore, similar to Apple's ARKit, which runs on some newer Android phones.

There’s also Google’s Tango project, which lets you hold a phone up to an object and then uses the phone’s depth-sensing camera to provide contextual information about it.

Microsoft has also been experimenting with mixed reality, and has made a development edition of its AR headset, HoloLens, available for testing.

HoloLens is a computer in its own right, built into a headset that presents digital 3D models – which Microsoft refers to as holograms – on top of the existing world. It also has a depth-sensing camera (based on Microsoft Kinect technology), allowing the device to recognise objects in its surroundings.

So what’s important about Apple’s AR play?

What makes Apple’s AR implementation special is that, unlike Microsoft HoloLens, for example, it’s designed to run on the iPhones and iPads many people already own, rather than on dedicated hardware.

The new iPhone 8 and iPhone X do have powerful new cameras, but Apple’s software algorithms do much of the work. This means that, for the first time, anyone with an iPhone 6s or later can access AR experiences, a point Apple highlighted during its keynote presentation.

Apple also demonstrated these new capabilities at its Worldwide Developers Conference in June, using the popular game Pokémon Go.

The game originally included a basic AR mode that placed Pokémon in the world using the camera view, but it had no perception of what objects were in front of the camera. As a result, players posted images of Pokémon in bizarre locations.

With the new AR system, Apple seems well placed to fix this issue. In the demo, Pokémon appeared in the right place – on the grass in a park, say, rather than floating in midair.


Apple has dubbed this process “world tracking”. ARKit uses a technique called visual-inertial odometry, which combines data from the smartphone’s motion-sensing hardware (its accelerometer and gyroscope) with software analysis of the camera’s view of the scene, to superimpose graphics more precisely on the real world.
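To see why combining the two sources helps, consider a deliberately simplified sketch in Python. It is not how ARKit works internally: it tracks a phone along a single axis and assumes the camera can report the phone's position directly, whereas real visual-inertial odometry estimates motion in 3D by tracking many visual features from frame to frame. The class `ToyVisualInertialTracker`, its `alpha` and `beta` gains and the `simulate` helper are hypothetical names invented for illustration. Integrating a slightly biased accelerometer on its own makes the position estimate drift ever further, while regular camera fixes keep the error bounded.

```python
from dataclasses import dataclass


@dataclass
class ToyVisualInertialTracker:
    """Hypothetical 1D tracker: IMU dead reckoning corrected by camera fixes.

    Illustrative only; this is not ARKit's algorithm.
    """
    position: float = 0.0  # metres
    velocity: float = 0.0  # metres per second
    alpha: float = 0.5     # how strongly a camera fix corrects position
    beta: float = 0.1      # how strongly a camera fix corrects velocity

    def predict(self, accel: float, dt: float) -> None:
        # Inertial step: integrate the accelerometer reading. Any sensor
        # bias is integrated twice, so position error grows quadratically.
        self.velocity += accel * dt
        self.position += self.velocity * dt

    def correct(self, visual_position: float, dt: float) -> None:
        # Visual step (assumed direct position fix from the camera): pull the
        # estimate toward it, since it does not accumulate drift over time.
        residual = visual_position - self.position
        self.position += self.alpha * residual
        self.velocity += self.beta * residual / dt


def simulate(steps: int, use_camera: bool) -> float:
    """Phone held still at 0 m; the accelerometer reports a 0.1 m/s^2 bias."""
    dt = 0.1  # seconds between sensor updates
    tracker = ToyVisualInertialTracker()
    for _ in range(steps):
        tracker.predict(accel=0.1, dt=dt)  # biased reading; true accel is 0
        if use_camera:
            tracker.correct(visual_position=0.0, dt=dt)  # camera sees no motion
    return tracker.position


if __name__ == "__main__":
    print(f"IMU only:     {simulate(100, use_camera=False):.3f} m of drift")
    print(f"IMU + camera: {simulate(100, use_camera=True):.3f} m of drift")
```

Over ten simulated seconds, the IMU-only estimate drifts by about 5 metres even though the phone never moves, while the camera-corrected estimate settles at a few millimetres. The correction step here is a basic alpha-beta filter, chosen for brevity; real visual-inertial systems generally rely on more sophisticated estimators, such as Kalman filtering or nonlinear optimisation.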

Apple boasted at its event that it now has the biggest AR platform in the world. That may be so, but the real benefit lies elsewhere: just as the original iPhone put a powerful computer in every pocket, the iPhone X puts an AR system in every hand.