They said it was crazy – and in truth the European Commission’s billion-euro plan to build a computer model of the human brain appears to have been too ambitious. But after years of controversy and dispute, many neuroscientists believe that the Human Brain Project may no longer be doomed to failure.

Not only have the project’s governance arrangements been overhauled, but the scientific programme is also now being refocused. The hope is that the revamped project will be an information hub for neuroscientists, providing researchers with computational tools and mathematical models for understanding brain processes.

The Human Brain Project was originally conceived by the charismatic neuroscientist Henry Markram, who famously outlined his vision to build a brain in a supercomputer back in 2009. The idea rapidly gained momentum and, in 2013, Markram became director of an EU flagship project aiming to “integrate research data from neuroscience and medicine in an effort to understand the human brain by simulation”.

The project would be funded by a billion euros spread over a decade and would, it was hoped, help support diagnosis and therapy of neurodegenerative diseases such as Alzheimer’s.

But the response from many neuroscientists was less than enthusiastic. One researcher dismissed the stated aim of creating a computer model of the human brain as “crazy” while another, more bluntly, described it as “crap”.

In July 2014, several hundred scientists eligible for funding through the project criticised it in an open letter. Among them were two of last year’s Nobel laureates in physiology or medicine: Edvard and May-Britt Moser.

The letter stated that, if the signatories’ concerns were not met, they would boycott the effort. It blasted the project’s “narrow” approach and accused it of “substantial failures” in openness and governance. In response, a mediation process was set up, and in March this year the mediation committee submitted its report, agreeing with much of the criticism. It concluded that the project, while “visionary” and “science-driven”, was “overly ambitious in relation to the simulation of the whole human brain and in relation to potential health outcomes”.

However, the committee fully backed the project’s continued development of neuroinformatics platforms, which provide scientists with computational tools and mathematical models for understanding brain processes. It also accepted the concerns about transparency and governance thus far and agreed to follow the recommendations by the researchers.

The project’s board of directors has approved a number of these, including the abolition of the three-person executive committee Markram was on. The report also catalysed a rewriting of the “framework partnership agreement”. This crucial agreement will outline the extent to which the project’s goals will change and is due to be released later this year.

Building blocks

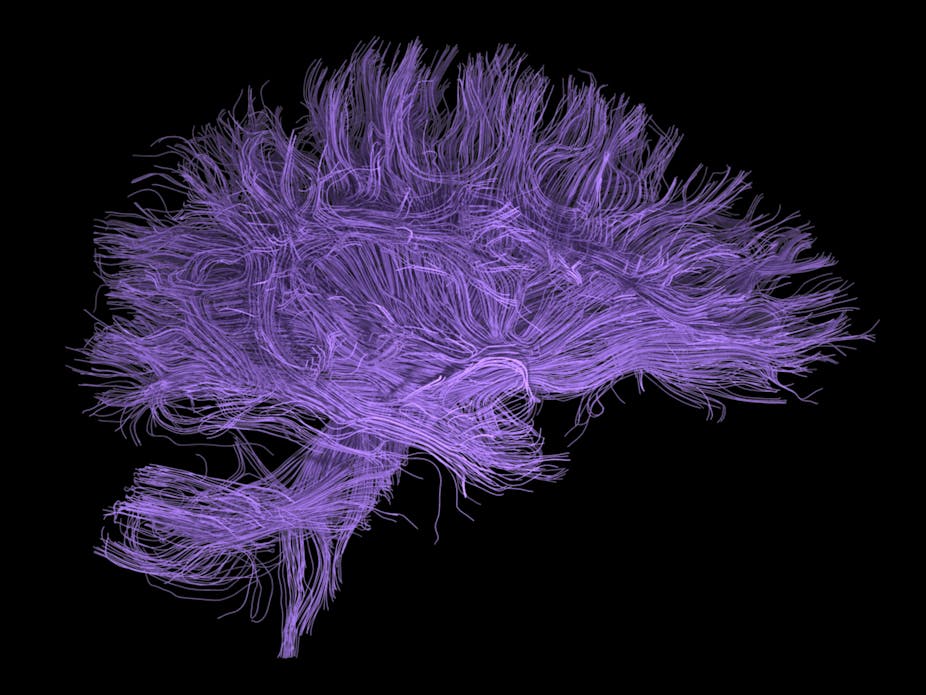

One of the reasons that the project is so controversial is its “bottom-up” approach to brain simulation. This involves taking the simplest building blocks of a complicated system, simulating each part mechanistically and watching as more complex behaviour emerges. The approach has been very successful in many scientific disciplines, but when applied to the brain it immediately runs into problems.

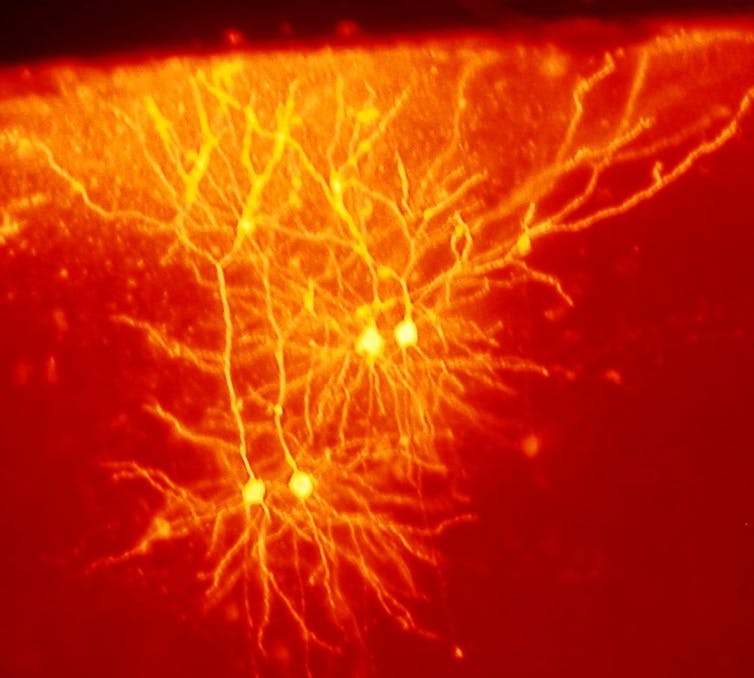

For example, how simple are the building blocks you should start with? Atoms are extremely fundamental but you’d need to take account of a hundred trillion of them just to simulate a single neuron. How about neurons? There are around a hundred billion of them in the human brain and simulation at that level might be possible, but in that case you may well be leaving out key information.

Moreover, we lack sufficient experimental data on how neurons connect to each other and how this changes dynamically with time. And finally, even assuming the simulation is successful, it’s not clear we have the theoretical understanding to make sense of what the results mean.

That’s not to say that bottom-up brain simulation isn’t worthwhile. Rather, it is to accept that for many neuroscientific problems there are better ways of understanding what’s going on, such as top-down models and sophisticated techniques for data analysis. So we shouldn’t be narrowing the approach; we should be broadening it, enabling neuroscientists to run the simulations relevant to them.

This is where the Human Brain Project will best fit in. Christof Koch, chief scientific officer of the Allen Institute for Brain Science, has described neuroscience as a “splintered field”. Laboratories across the world are heading off in different directions with a dizzying variety of tools, animal species, and behaviours, amounting to a “sociological Big Bang”.

The Seattle-based Allen Institute has aimed to address this problem with standardised large-scale databases such as a gene expression atlas of the entire mouse brain. But neuroscience still lacks an “information hub” where data from across the world is organised and analysed in a consistent way. It is imperative that the Human Brain Project works towards becoming this hub so that new insights into this data can be uncovered.

Positive signs

That the project is adapting to address the concerns raised is undoubtedly a positive sign. But implementation is key. If the overhyped bottom-up brain simulation remains the centrepiece of the project, it is likely to stay mired in controversy. But if, instead, it keeps to the new track of creating the tools needed to handle vast amounts of neuroscientific data, it may well prove to be popular.

Viewed this way, it has the potential to complement another mega project, the White House BRAIN Initiative announced by the Obama administration in 2013. This aims to radically improve the technologies used to record data from the brain. If the Human Brain Project can radically improve the technologies used to analyse and model this data, the controversy could one day turn into congratulation.