What’s the fastest thing you can see? Events that play out over a scale of minutes or seconds are easy to see. Events at much smaller timescales — milliseconds and shorter — can be entirely invisible to us. To see them, we have to perform a kind of temporal microscopy.

Magnification of time (seeing the very fast in slow motion) first became possible in California, in the area we now know as Silicon Valley. But this was long before the place became famous for technological innovation.

It was the late 19th century, and the area was occupied by oil wells, farming and winemaking. Most travel was by horse, and a common pastime was betting at the races.

Every punter was in it to win, and it was the quest of one particular horse owner, Leland Stanford, to achieve faster paces that spurred new technologies for capturing time.

The fight for full flight

Watching a champion thoroughbred at a fast gallop, you’ll notice the horse’s legs are blurred. The same will be true in photographs taken of the horse. In the case of the photograph, the camera’s film (or electronic sensor in digital cameras) averages together the incoming light over time.

In the case of biological vision, processes in your retina and brain average the light-evoked signals over time, yielding a similar blur.

In the 19th century, everyday experience of watching horses made it obvious to people that unaided vision was not up to seeing the details of a galloping horse’s gait. One question was much debated: are the four legs of a galloping horse ever off the ground all at once?

People simply couldn’t see whether a horse was ever in full flight. Enter Leland Stanford, later the founder of Stanford University, who realised that a better understanding of a horse’s gait might give him an advantage over the competition.

Stanford resolved to settle the issue of whether all four galloping legs were ever simultaneously off the ground.

At the time, photographers commonly took a photo by first covering the lens with their hat, initiating the exposure by lifting their hat off, and terminating it by putting the hat back. The “snapshot” — rapid opening and closing of a shutter — had not been invented.

With the long exposures these early photographers invariably used, the positions occupied by a galloping horse’s legs all combined into a blur. It was impossible to determine whether the horse’s legs were ever off the ground simultaneously: the information had been obliterated by the temporal blur.

To achieve shorter exposures, Stanford sought out an Englishman named Eadweard Muybridge, who had lately gained renown with spectacular photographs of landscapes in the American West. After some effort, Muybridge rigged a contraption involving a spring and two boards that slipped past each other.

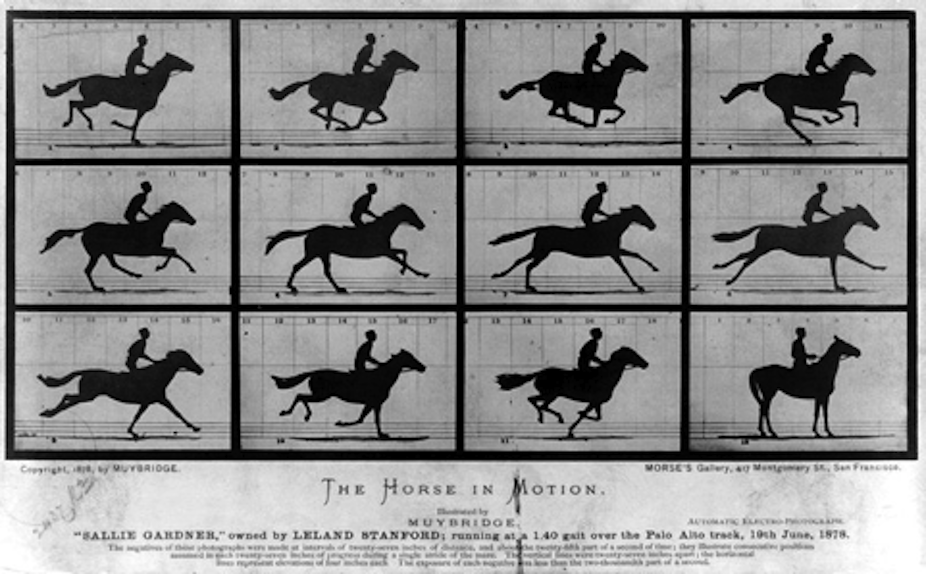

Muybridge’s device exposed the film to light for a short, controlled interval, perhaps a twentieth of a second. To create a series of images of Stanford’s galloping horse, Sallie Gardner, Muybridge laid out a whole battery of cameras and triggered them with trip-wires.

Muybridge’s result is displayed in the image at the top of this article. The second and third images in the series show all four hooves of the horse off the ground. Thanks to simple advances in technology, a door had been opened onto the world of the too-fast-to-see.

Muybridge’s stopped-motion photographs quickly became popular. And for those less interested in horses than in the slowness of human sight, the workings of the camera provided a simple model of human limitations. The explanation for our inability to see whether a horse is ever in full flight seemed obvious: temporal blur created by the visual machinery inside our heads.

Brain blur

Temporal blur is the correct explanation of some perceptual speed limits. Our inability to see the continual flicker of fluorescent lights is one example.

Fluorescent lights, even well-functioning ones (very likely including those above your head if you work in an office), flicker on and off more than 100 times per second, much too fast for vision to resolve. Instead of perceiving the flicker, we see the average of the on phase and the off phase.
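To make this averaging concrete, here is a toy sketch in Python. It assumes the light behaves like an ideal square wave at 100 Hz (on half the time, off half the time) and treats "vision" as nothing more than a plain average over a fixed time window. The 50-millisecond window, the sampling rate and the function names are hypothetical choices for illustration, not measurements of the human visual system.

```python
def flicker_intensity(t, freq_hz=100.0, duty=0.5):
    """Idealised flickering light: 1.0 during the on phase, 0.0 during the off phase."""
    phase = (t * freq_hz) % 1.0  # position within the current cycle, from 0 to 1
    return 1.0 if phase < duty else 0.0


def perceived_brightness(window_s=0.05, start_s=0.0, sample_rate=10_000, freq_hz=100.0):
    """Average the light over a time window (hypothetical stand-in for temporal blur).

    The 50 ms default window is an assumed, illustrative value only.
    """
    n = round(window_s * sample_rate)  # number of samples inside the window
    samples = [flicker_intensity(start_s + i / sample_rate, freq_hz) for i in range(n)]
    return sum(samples) / n


# A long window spans several flicker cycles, so on and off phases average out.
print(perceived_brightness(0.05))
# A very short window, like a fast shutter, catches only one phase.
print(perceived_brightness(0.002))
print(perceived_brightness(0.002, start_s=0.006))
```

With a window spanning several flicker cycles the result sits near 0.5, the average of the on and off phases; a window much shorter than a single on phase reports the light as fully on or fully off, depending on when it opens.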

This temporal brain blur lasts long enough that it may be sufficient to explain the difficulty of perceiving the relative positions of the legs during a fast gallop.

Still, temporal blur cannot be the whole answer to the puzzle of the horse’s hooves. Our vision fails not only when a horse is at a full gallop, but also when it merely trots.

When a horse trots, its legs typically travel slowly enough that they are not perceived as a blur: we witness the whole swing of the legs’ trajectory back and forth. Yet the unaided eye is still not up to deciding whether all four legs are ever off the ground simultaneously.

It’s a strange feeling, seeing the legs and their motions very clearly, but not knowing at any instant where exactly they are relative to each other. There is no counterpart to this human failing in a camera. You may be able to experience this phenomenon in the movie shown below.

A photograph reflects a single temporal limit, the exposure duration set by the camera’s shutter mechanism. In contrast, visual processing in the human brain reflects a collection of mechanisms working at different rates. Laboratory experiments over the last 20 years have teased apart some of these mechanisms to reveal their distinct speed limits.

This movie, I believe, makes manifest the slow mechanism in your head that prevents access to the simultaneous positions of the horse’s hooves.

When viewing the movie, you’ll see two sets of lines alternating between a leftward and a rightward tilt. The perceptual mechanisms in your brain responsible for this are easily fast enough to extract these oriented lines, even at the fast rate of 12 pictures per second at which your computer should display the middle row of the movie.

Nevertheless, for that fast middle row, seeing whether the sets of lines are at each instant oppositely tilted or instead share their tilt is beyond our mental grasp. As with the horse’s hooves, here we cannot apprehend which things happen at the same time.

When we view a scene, after light hits our retinas, our visual brains process local bits of the scene individually, rapidly extracting the shapes and colours present. These mechanisms do this without determining which shapes or colours occur at the same time elsewhere in the scene. Our laboratory results indicate that joining the elements, such as the horse’s hooves, in some cases requires a distinct process: a shift of visual attention.

Calling sight to attention

In its technical meaning, visual attention is a mental resource used to enhance processing of selected elements of the scene. Most often it enhances processing at the location where your eyes are pointed, but you can also select objects in the periphery of your vision. Many people notice this only in situations where it’s best to avoid looking directly at something, such as when sharing an elevator with a stranger.

We can “check out” the stranger without looking directly, using shifts of our visual attention. Such shifts actually occur all the time, but normally attention flits about so automatically and rapidly that we don’t notice the movements. Scientists themselves have struggled to determine when shifts occur and what they do for our perceptions.

In our lab, to reveal the role of attention in determining the spatial relationships among objects in the scene, we used a display resembling two rings of coloured jellybeans. View the movie below from a forthcoming Current Biology article reporting our experiments.

People in the experiment were required to keep their eyes fixed on the grey dot at the centre, to be sure that shifts of attention, rather than of the eyes, were responsible for any selective processing. By varying the spinning rate of the display, we could find the maximum speed at which attention could follow the discs.

At rotation rates above the attentional speed limit (which unfortunately can’t be displayed on a webpage), the discs and their colours were still easily perceived, but one could no longer apprehend which colours were adjacent. It was as if we had peeled back one layer of normal visual consciousness.

Together with the results of an additional attentional test, this phenomenon suggests that a shift of attention is required to mentally link adjacent elements. When the colour rings revolve faster than the speed limit on following them with attention, one continues to perceive the colours but cannot see which are adjacent.

The situation may be the same with the rapid swing of a trotting horse’s legs.

More than a century ago, Muybridge was able to slow down external visual events so that they could be scrutinised with our relatively slow perceptual processes.

While this yielded new insights into the physical world at the millisecond scale, neither Muybridge’s camera nor any subsequent device has succeeded in capturing the mental world at such fine timescales.

But with traditional techniques of psychological experiments and a little ingenuity, we have begun to reveal the brief episodes of processing that form our visual experience.

The research outlined in this story forms the basis of a new article in Current Biology.