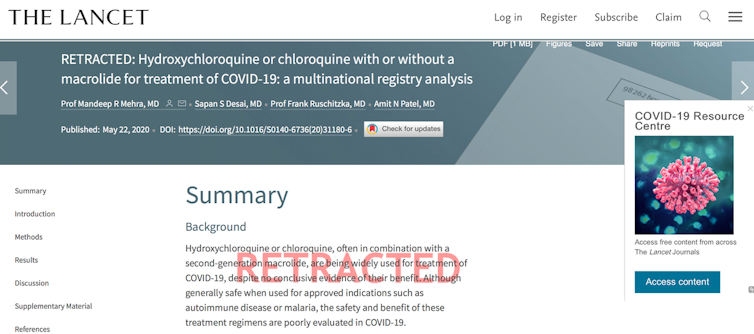

The world was stunned when The Lancet, one of medicine’s most respected scientific journals, retracted a blockbuster article within two weeks of its publication over doubts about the credibility of its data.

The public, alarmed by the retraction of a COVID-19 treatment study from a journal known for some of the most stringent standards in scientific publishing, dubbed the affair “Lancet-gate”.

The retracted article reported a chilling mortality risk among COVID-19 patients treated with anti-malarial drugs chloroquine (CQ) or hydroxychloroquine (HCQ). The article made many believe the drugs might have caused more deaths than cures.

The research seemed convincing. It was based on a gigantic sample size of close to 15,000 patients from 671 hospitals around the world who had been treated with the drugs. But it took only days for scientists and the public to spot something fishy, involving the credibility of both the data and the institution responsible for the data collection and management.

Why did The Lancet retract the article? And what can we learn from this?

Issues of data integrity and analysis

The article’s authors based their research on an analysis of data provided by Surgisphere, a health database management company based in the United States. Surgisphere claimed to have collected electronic health records from over 96,000 COVID-19 patients in 671 hospitals globally. The records were said to be updated automatically in real time.

The article at first prompted the World Health Organization to decide on a global suspension of the HCQ/CQ arm of ongoing Solidarity Trial research involving a hundred countries in the search for COVID-19 treatments.

It also prompted European Union governments to ban the use of HCQ for treating COVID-19 patients.

But scientists were suspicious about the integrity of the results and the data analysis. On May 28, 120 scientists from 24 countries sent an open letter to The Lancet.

They pointed out the following issues.

The research lacked analysis on confounding factors – variables that could potentially influence treatment outcomes – such as disease severity and the use of different HCQ/CQ dosages.

The authors did not comply with the standard scientific practice of making the raw data publicly available, even though The Lancet is one of the signatories of the Wellcome Statement on open access to COVID-19 research data.

The article did not mention specific approval from a research ethics committee and also did not acknowledge the contribution of the countries or hospitals where the data originated. The authors denied a request to disclose information on hospitals that contributed data.

Data on Australia were not compatible with government reports. The Lancet article reported 73 deaths in Australia as of April 21, when only 67 deaths had been officially announced. The main hospitals that treated COVID-19 patients in Australia have denied a connection with Surgisphere.

The article reported as many as 4,402 patients from Africa. Considering the continent’s level of health and research facilities, African scientists doubted their hospitals would have the capacity to provide electronic health records with a high level of detail for so many COVID-19 patients.

One of the tables comparing various health variables across all patients on different continents showed unusually small variations.

The mean daily dose of HCQ shown was 100mg higher than recommended by the US Food and Drug Administration, while 66% of the data came from that country.

In several locations, the research showed an improbable ratio for the use of chloroquine versus hydroxychloroquine. For example, it mentioned 49 patients in Australia received CQ and 50 received HCQ. CQ, which has much worse side effects, is no longer routinely used in Australia and its use would require complicated procedures.

In certain countries that were analysed, the precision of the statistical estimates was implausibly high, given the number of deaths in those countries.
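The Australian discrepancy above illustrates the kind of basic cross-check that can be run against any aggregated dataset. The following is a minimal sketch in Python, not the method used by the letter's signatories: the names `CountCheck` and `check_counts` are hypothetical, and the only figures used are the 73 reported and 67 officially announced deaths cited above.

```python
from dataclasses import dataclass


@dataclass
class CountCheck:
    """Compares a count reported in a study with an official total (hypothetical helper)."""
    region: str
    reported: int
    official: int

    @property
    def excess(self) -> int:
        # Positive when the study reports more cases than the official source.
        return self.reported - self.official

    @property
    def plausible(self) -> bool:
        # A study drawing on a subset of hospitals should not contain more
        # deaths than the official national total for the same date.
        return self.reported <= self.official


def check_counts(reported: dict[str, int], official: dict[str, int]) -> list[CountCheck]:
    """Build a check for every region that appears in both sources."""
    return [
        CountCheck(region, count, official[region])
        for region, count in reported.items()
        if region in official
    ]


# Figures cited in the open letter: deaths in Australia as of April 21.
reported_deaths = {"Australia": 73}
official_deaths = {"Australia": 67}

for check in check_counts(reported_deaths, official_deaths):
    status = "ok" if check.plausible else f"exceeds official total by {check.excess}"
    print(f"{check.region}: reported {check.reported}, official {check.official} -> {status}")
```

A check like this cannot prove that a dataset is sound, but a failure is a clear signal that the data source needs to be examined before its analysis is trusted.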

The Lancet followed up the letter by launching an independent investigation. A day after this, it retracted the article.

The WHO also resumed the HCQ/CQ arm of the Solidarity Trial.

Except for Sapan Desai, the CEO and founder of Surgisphere, the data supplier, all authors requested the retraction. The relatively quick retraction was prompted by Surgisphere’s refusal to disclose the raw data, effectively making The Lancet’s independent investigation impossible.

What do we learn?

Before an academic journal publishes a scientific manuscript, the journal asks other scientists working in a similar field to peer-review the article. The peer reviewer checks and critiques the research. However harsh the peer-review process, the reviewers will generally not delve deep into the smaller details of the raw data, especially if it involves a large and complex dataset.

That the article on the risks of CQ and HCQ got through this process shows peer review is not entirely free from error. Unfortunately, despite its flaws, it is currently the only available way to ensure scientific rigour before publication.

The “Lancet-gate” case shows the quality of data is extremely important. The validity of the data largely determines the validity of the analysis. If the data obtained are reliable, the analysis can be trusted. In this case, because the reliability of the data source is doubtful, the analysis and interpretation are also doubtful.

This leads to another lesson: the need to push for open science. Research data should be made publicly accessible, which is still not a standard practice in scientific publication.

This case also reminds us that publishing a theory or research discovery does not instantly make it a part of science. A theory or research finding is published with the intention of sharing it with a wider audience, whether the public at large or the scientific community, which can then scrutinise it further. Although The Lancet article met a very high publication standard, it remained open for the public to further assess its true value.

In this regard, a theory or discovery can only be accepted as part of science after it is sustained through a long, arduous and open process of scrutiny and criticism. This has been going on for hundreds or even thousands of years in science. Indeed, this is the scientific process.

Scientists are still humans who are never entirely free from errors. There are scientists who miscalculate. There are scientists who use the wrong formula or theoretical concept for a set of scientific problems. There are also scientists who fabricate and falsify their findings.

The scientific community has established formal and informal processes to minimise these errors.

Formally, research institutions set up scientific committees to examine the scientific value of research and its benefits to mankind. There are also research ethics committees that rigorously examine the compliance of researchers with ethical standards including, among others, protection of study participants and data sharing.

Informally, true researchers generally appreciate a culture of openness, transparency and mutual criticism. It’s considered part of the process of science maturation. This process is achieved through scientific publication as well as interaction in scientific conferences, meetings or any other media.

Thinking and interacting, intellectually, are essential in solving mankind’s problems, such as the pandemic now engulfing our existence. In doing so, however, we need to remain vigilant to the possibility of making mistakes that could lead us to wrong conclusions.

Note: on 17 June 2020, the WHO announced that the HCQ arm of the Solidarity Trial had been stopped again because the clinical trial data showed no benefit.