The COVID-19 global pandemic has been accompanied by misinformation about the virus, its origins and how it spreads.

One in seven Canadians thinks there is some truth to the claim that Bill Gates is using the coronavirus to push a vaccine with a microchip capable of tracking people. Those who believe this and other COVID-19 conspiracy theories are much more likely to get their news from social media platforms like Facebook or Twitter.

In extreme cases, conspiracy thinking spurred by online disinformation can result in hate-fuelled violence, as we saw in the insurrection at the U.S. Capitol, the Québec City mosque shooting, the Toronto van attack and the incident in 2020 where an armed man crashed his truck through the gates of Rideau Hall.

Moderate content

These and other events have placed pressure on social media platforms to label, remove and slow the spread of harmful, publicly viewable content. One result of the platforms' responses to misinformation was the deplatforming of Donald Trump during the final weeks of his presidency.

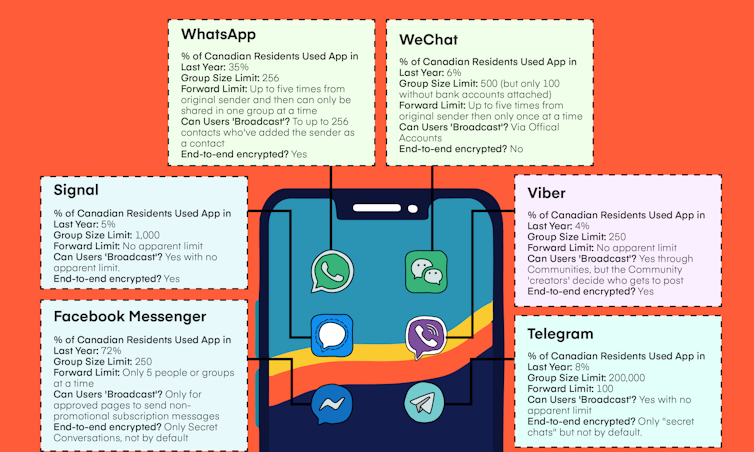

These discussions on content moderation have mainly centred on platforms where content is generally open and accessible to view, comment on and share. But what’s happening in those online spaces that aren’t open for all to see? It’s much harder to say. And perhaps not surprisingly, conspiracy theories are also spreading, and causing harm, on private messaging apps like WhatsApp, Telegram, Messenger and WeChat.

By leveraging large groups of users and long chains of forwarded messages, false information can still go viral on private platforms.

White nationalists and other extremist groups are trying to use messaging apps to organize, and malicious hackers are using private messages to conduct cybercrime. False stories spreading on messaging apps have also led to real-world violence, as happened in India and the United Kingdom.

Trust and private communication

We conducted a survey of 2,500 Canadian residents in March 2021 and found that they’re increasingly using private messaging platforms to get their news.

Overall, 21 per cent said that they rely on private messages for news — up from 11 per cent in 2019. We also found that people who regularly receive their news through messaging apps are more likely to believe COVID-19 conspiracy theories, including the false claim that vaccines include microchips.

There is a level of intimacy in private messaging apps that’s different from news viewed on social media feeds or other platforms, with content shared directly by people we often know and trust. A majority of Canadians reported that they had a similar level of trust in the news they receive on private messaging apps as they do in the news from TV or news websites.

Our research also uncovered a uniquely Canadian phenomenon. As a multicultural society with many newcomers, the Canadian private messaging landscape is remarkably diverse. For example, people who have arrived in Canada in the last 10 years were more than twice as likely to use WhatsApp. Similarly, newcomers from China were five times more likely to use WeChat.

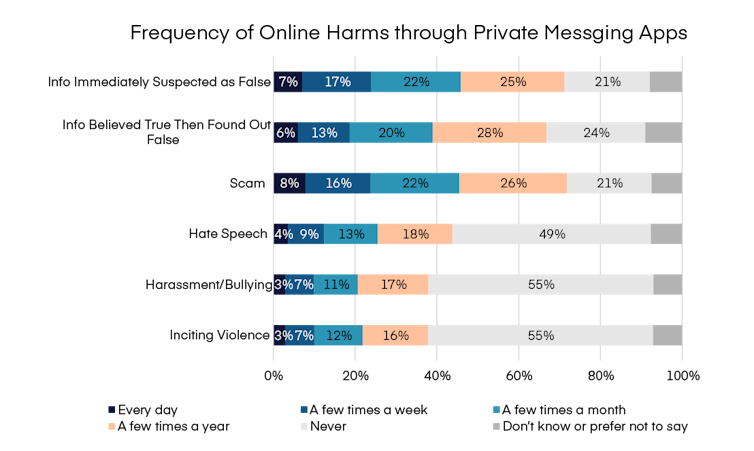

We also found that half of Canadians receive messages that they suspect are false at least a few times per month, and that one in four receive messages with hate speech at least monthly. These rates were higher among people of colour. Because different apps provide different ways of spreading and mitigating harmful content, each requires a tailored strategy.

Mitigating harm

Platforms and governments around the world are grappling with the tension between mitigating online harms and protecting the democratic values of free expression and privacy, particularly on more private modes of communication. This tension is heightened by some platforms’ use of privacy-preserving end-to-end encryption, which ensures that only the sender and receiver can read the messages.

Some messaging apps have been experimenting with how to reduce the spread of harmful materials, including the introduction of limits on group sizes and on the number of times a message can be forwarded. WhatsApp is now testing a feature that nudges users to verify the source of highly forwarded messages by linking to a Google search of the message content. Some experts are also advancing the idea of adding warning labels to false news shared in messages — a concept that a majority (54 per cent) of Canadians supported when we described the idea.

However, there is certainly more that governments can do in this quickly moving area. More transparency is required from messaging platforms about how they’re responding to user reports of harmful material and what approaches they’re using to stall the spread of these messages. Governments can also support digital literacy efforts and invest in research about harms spread through private messaging in Canada.

As Canadians shift to more private modes of communication, policy needs to keep up to maintain a vibrant and cohesive democracy in Canada while protecting free expression and privacy.