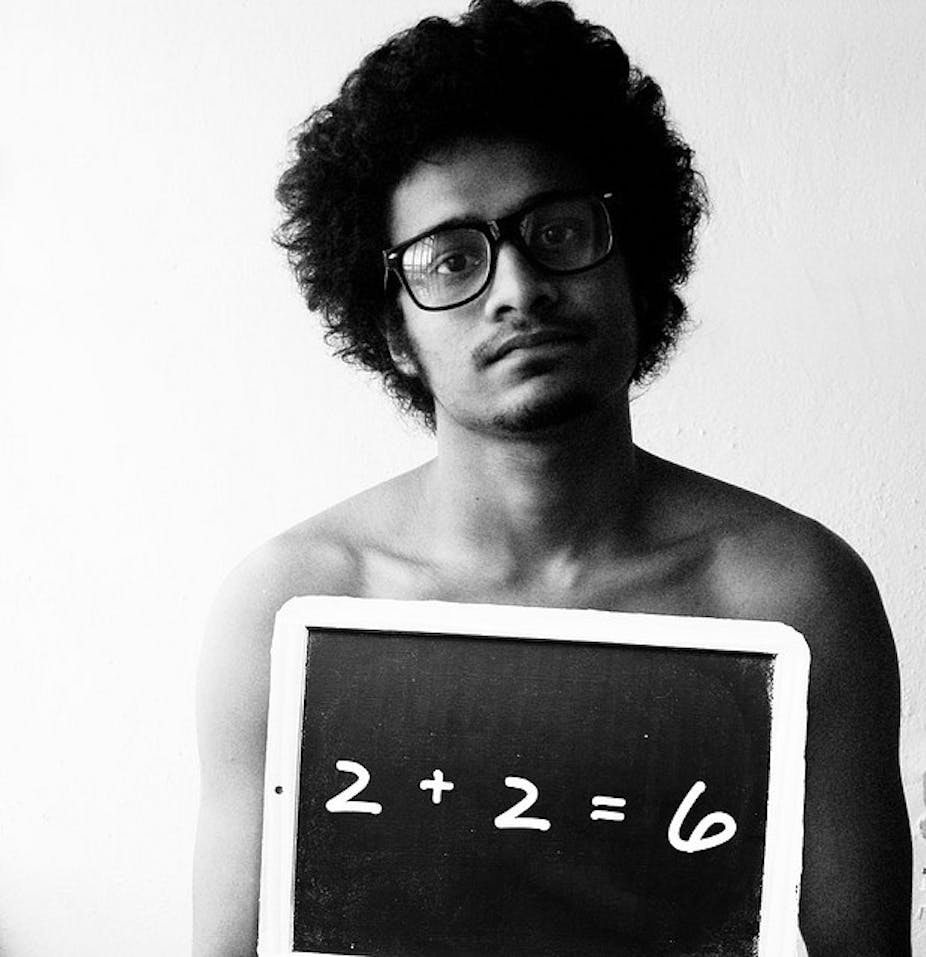

THE STATE OF SCIENCE: Fraud is the exception, not the rule, in science, but it happens, as one recent high-profile case showed. How does this occur, and what can mathematics bring to the equation? Jon Borwein and David H. Bailey explain.

From time to time, the scientific community is rocked by cases of scientific fraud. Needless to say, such incidents do little to instill confidence in a public that’s already predisposed to be skeptical of inconvenient scientific findings, including biological evolution and human-induced global warming.

One notable case of fraud came to light in 2002 when Bell Labs researcher Hendrik Schön, once described as a “rising star” in the field of nanoelectronics, was accused of fraud by a review panel consisting of several prominent scientists, including physicist Malcolm Beasley of Stanford University.

Most of the 25 papers in question were published in prestigious journals such as Science, Nature and Applied Physics Letters.

More recently, in 2008 a Science article started:

“The only two peer-reviewed scientific papers showing that electromagnetic fields (EMFs) from cell phones can cause DNA breakage are at the center of a misconduct controversy at the Medical University of Vienna (MUV). Critics had argued that the data looked too good to be real, and in May a university investigation agreed, concluding that data in both studies had been fabricated and that the papers should be retracted.”

This month, Netherlands psychologist Diederik Stapel was accused of publishing “several dozen” articles with falsified data.

Stapel’s papers were certainly provocative. One claimed that disordered environments such as littered streets make people more prone to stereotyping and discrimination. After being challenged in an “editorial expression of concern” in Science, Stapel confessed that the allegations were largely correct.

How could such frauds have happened? Firstly, scientific investigation is premised on open enquiry, and treating every new result as a potential fraud would be both antithetical to that premise and destructive.

In general, false findings such as the cell phone case are easier to uncover than “prettifying”, which in some cases comes from enthusiastic assistants “cleaning” the data to strengthen the case.

This culture of prettifying is certainly cultivated by media reports in which every advance must be a “breakthrough”. In Stapel’s case, he was able to operate for so long because he was “lord of the data”.

He did not make this data available for other researchers, a practice Jelte Wicherts of the University of Amsterdam termed “a violation of ethics rules established in the field”.

Counting on maths

It’s worth examining the role of mathematics in general, and statistics in particular, in the disclosure of these frauds. In the case of Stapel’s work, researchers found “anomalies” including suspiciously large experimental effects and a lack of outliers – observations that appear to deviate markedly from other members of the sample – in the data.
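To make the idea of "a lack of outliers" concrete, here is a minimal sketch of one possible check. It is not the procedure used in the Stapel investigation. It assumes the measurements should be roughly normally distributed, and the names `tail_share`, `expected_tail_share`, `noisy` and `tidy` are hypothetical, as is the data itself.

```python
import random
from statistics import NormalDist, mean, stdev


def tail_share(values, z=2.0):
    """Share of observations more than z sample standard deviations from the mean."""
    m, s = mean(values), stdev(values)
    return sum(abs(v - m) > z * s for v in values) / len(values)


def expected_tail_share(z=2.0):
    """Share expected beyond z standard deviations if the data were normal."""
    return 2 * (1 - NormalDist().cdf(z))


# Hypothetical data: realistic noisy measurements versus a suspiciously tidy set.
rng = random.Random(0)
noisy = [rng.gauss(0, 1) for _ in range(10_000)]
tidy = [i / 500 - 1 for i in range(1001)]  # evenly spaced from -1 to 1

print("expected under normality:", round(expected_tail_share(), 4))
print("noisy data:", round(tail_share(noisy), 4))
print("tidy data:", round(tail_share(tidy), 4))
```

A tail share far below the expected value is only a prompt for a closer look, not proof of wrongdoing, since many honest data sets are not normally distributed.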

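A lack of outliers and unlikely distributions are tell-tale signs of poorly constructed artificial data. A related check is what is known in the trade as Benford’s law: in many real-world data sets the leading digit is 1 about 30% of the time, and made-up numbers often fail to follow this pattern.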
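Benford's law says that in many naturally occurring data sets the leading digit d appears with probability log10(1 + 1/d), so about 30.1% of values start with 1 and only about 4.6% start with 9. The sketch below shows one way such a check might be coded. It is an illustration under stated assumptions, not the method used in any of the cases above: the function names and the two sample data sets are hypothetical, and the law only applies to data spanning several orders of magnitude.

```python
import math
from collections import Counter


def benford_expected():
    """Expected share of each leading digit 1..9 under Benford's law."""
    return {d: math.log10(1 + 1 / d) for d in range(1, 10)}


def leading_digit(x):
    """First significant digit of a non-zero number."""
    # Scientific notation always starts with the first significant digit.
    return int(f"{abs(x):.15e}"[0])


def leading_digit_counts(values):
    """Count how often each digit 1..9 leads, ignoring zeros."""
    counts = Counter(leading_digit(v) for v in values if v != 0)
    return {d: counts.get(d, 0) for d in range(1, 10)}


def benford_chi_square(values):
    """Pearson chi-square statistic of observed leading digits against Benford."""
    counts = leading_digit_counts(values)
    n = sum(counts.values())
    expected = benford_expected()
    return sum((counts[d] - n * expected[d]) ** 2 / (n * expected[d])
               for d in range(1, 10))


# Hypothetical data: powers of 2 are known to follow Benford's law closely,
# while the integers 100..999 have perfectly uniform leading digits.
powers_of_two = [2 ** k for k in range(1, 1000)]
uniform_digits = list(range(100, 1000))

print("powers of 2:", round(benford_chi_square(powers_of_two), 2))
print("uniform digits:", round(benford_chi_square(uniform_digits), 2))
```

With eight degrees of freedom, a statistic above roughly 15.5 would be unusual at the 5% level if the data really followed Benford's law. As with any such screen, a large value flags data for scrutiny rather than establishing fraud.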

Even setting aside outright fraud, statistical sloppiness pervades some fields. This is especially true in clinical medical research and in the social sciences, where many of the researchers are poorly trained quantitatively.

In an analysis published this year by Jelte Wicherts and Marjan Bakker of the University of Amsterdam, about half of 281 psychology journal papers examined contained some statistical error, and about 15% had at least one error that would have changed the reported finding, “almost always in opposition to the authors’ hypothesis”.

Let us emphasise here – in case it’s not completely obvious – that scientific fraud is the exception, not the rule. Our cursory search of Science’s archive showed about half-a-dozen headline cases in the past ten years. Business, politics or law would not fare as well.

In any event, it’s clear that:

(a) more care needs to be taken in using statistical methods in scientific and mathematical research.

(b) statistical methods can and should, to a greater extent, be used to detect fraud and manipulation of data (deliberate or not).

Perhaps the considerable attention drawn to recent incidents will lead to more rigorous analyses, and more circumspect behaviour by scientists. We shall see.

A version of this article first appeared on Math Drudge.

This is the seventh part of The State of Science. To read the other instalments, follow the links below.

- Part One: Does Australia care about science?

- Part Two: What’s a scientist – a poker or a puffin?

- Part Three: Science can seem like madness, but there’s always a method

- Part Four: Express yourself, scientists – speaking plainly isn’t beneath you

- Part Five: Science is imperfect – you can be certain of that

- Part Six: Why do people reject science? Here’s why …

- Part Eight: Get real: taking science to the next generation of Einsteins

- Part Nine: Critically important: the need for self-criticism in science

- Part Ten: Please, sirs, can we have some more? Aussie scientists need fuel, not gruel

- Part Eleven: Scientists and politicians – the same but different?

- Part Twelve: Tweed or speed … a day in the life of a modern scientist

- Part Thirteen: Selling science: the lure of the dark side

- Part Fourteen: Way off balance: science and the mainstream media