Articles on Artificial intelligence (AI)


The blocking of ChatGPT in Italy raises some important questions, including how to balance access to services with the need to protect children.

Computer scientists are overwhelmingly present in AI news coverage in Canada, while critical voices who could speak to the current and potential adverse effects of AI are lacking.

Generative AI can seem like magic, which makes it both enticing and frightening. Scholars are helping society come to grips with the potential benefits and harms.

Oral exams have a history dating back more than 2,000 years – and could once again be a solution for universities to test their students’ knowledge.

Some said AI would not just reach human-level intelligence, but would probably surpass it.

Generative AI is designed to produce the unforeseen, but that doesn’t mean developers can’t predict the types of social consequences it may cause.

Meta and Pico lead the field with their VR headsets, ChatGPT continues its inexorable rise and new engine developments are pushing the boundaries of the video game experience.

We may know what ChatGPT can do, but questions remain over who owns the copyright.

A scholar explains how artificial intelligence systems can revolutionize the way students learn.

Language model AIs are smooth talkers, but you shouldn’t rely on them to make important decisions. That’s because they have trouble telling the difference between a gain and a loss.

Large language models can’t understand language the way humans do because they can’t perceive and make sense of the world.

The user interfaces of AI chatbots, like ChatGPT, are designed to mimic natural human conversation. But in doing so, AI chatbots present as more trustworthy than they really are.

AI technology holds promise for education, but it will also likely exacerbate the digital divide and increase the online dominance of English.

Governments around the world have so far taken a light-touch approach. It’s not enough if we want to address the various potential harms of AI.

The government’s new white paper on AI regulation highlights opportunities for the country.

Pausing AI development will give our governments and culture time to catch up with and steer the rush of new technology.

A recent open letter calling for a temporary artificial intelligence development hiatus is more concerned with hypothetical risks about the future than the issues that are right in front of us.

As Russia’s war in Ukraine illustrates, the use of lethal automated weapons, or LAWS, can always be justified. Their ability to desensitize their users to the act of killing, however, shouldn’t be.

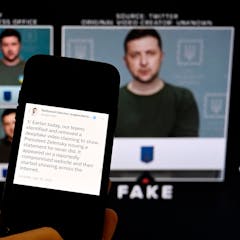

Powerful new AI systems could amplify fraud and misinformation, leading to widespread calls for government regulation. But doing so is easier said than done and could have unintended consequences.

Early research finds that people get just about the same gratification from sexting with a chatbot as they do with another human.