Articles on Bias

A study shows that Americans believe news organizations report the news inaccurately not because they are politically biased, but because they want to generate larger audiences and larger profits.

Recruiters are now routinely using AI to automate the screening of CVs and interview videos. But human bias already exists in the AI data – and it can even be heightened by the algorithm.

Just as human biases show up in machine learning systems, so, too, do people’s vagaries and vicissitudes.

ABC comedy series White Fever highlights a type of racism that is nuanced and hard to detect, but is just as harmful to people of colour.

It can be easy to mistake feelings like fear and anger for hate. When biases are acted out in harmful ways, however, speaking up can help stop hate from getting worse.

People are better able to see and correct biases in algorithms’ decisions than in their own decisions, even when algorithms are trained on their decisions.

Like all people, the way scientists see the world is shaped by biases and expectations, which can affect how they record and report. Rigorous research methods can minimize this effect.

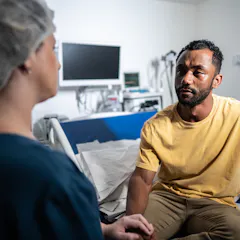

Many Black patients experience stark differences in how they’re treated during medical interactions compared to white patients.

AI has the potential to diminish the human experience in several ways. One particularly concerning threat is to the ability to make thoughtful decisions.

This bias in science journalism seems not to be due only to pragmatic concerns about time zones or the language spoken in the country where the scientist is based.

The use of large language models like ChatGPT is growing globally. These technologies are trained on datasets that reproduce existing biases, so as their use increases, those datasets must become more diverse.

Something to bear in mind if you find yourself swiping through profiles on a dating app later today.

Recent research suggests jurors are less likely to be lenient on attractive defendants than previously thought.

It has long been thought one couldn’t bend one’s intuition. Recent research reveals it is in fact possible to reduce bias through training.

Through action films, dramas and kids’ cartoons, right-wing activists are working to build their own alternative entertainment universe insulated from Hollywood’s purported liberal biases.

Sports researchers learned that conservative political leanings among state legislators lead to biases against transgender athletes among voters.

After decades of efforts to increase female representation in corporate decision-making bodies, few women are managing to take the reins of power.

Having a biased manager lowers productivity across the board – even for workers who aren’t targeted.

Personal bias, upbringing and even popular dramas can influence the way we evaluate political leadership. As election day nears, how might we make more balanced judgments?

While blatant discrimination is easy to condemn because of how obvious it is, there are subtler, more insidious forms that also need to be rooted out.